AI Works. The Hard Part Is Deployment.

When placed and implemented correctly, LLMs deliver substantial business value - reduced workload, increased revenue, lower operational costs.

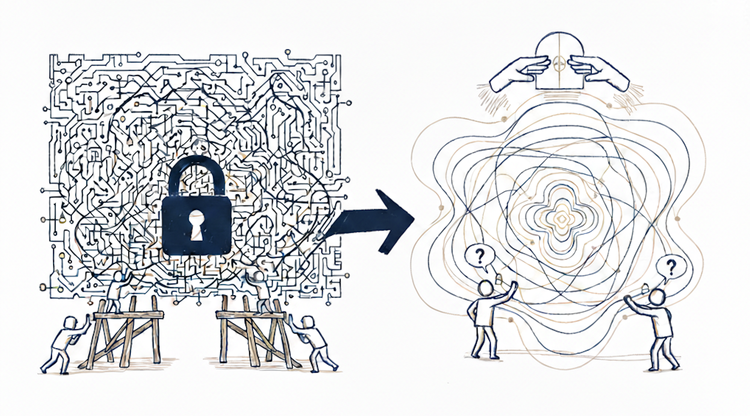

Sustaining that value requires understanding what deployment actually demands. The "invisible" work humans provided naturally does not disappear when LLMs replace them. It converts into technical and organizational infrastructure that must be built, maintained, and staffed.

This is not an obstacle. It is a strategic filter. Teams that understand this can place AI where the value justifies the investment. Teams that do not will discover the cost too late.

The filter becomes concrete through three questions: How do we get this working? How do we make it reliable? How do we make it sustainable?

What each answer demands - the architecture, the operations, the coordination - reveals what teams are actually signing up for.

In practice, this means every architectural constraint we explored in the first Understanding Intelligence series - together with the "invisible" capabilities humans provided - must be rebuilt as software, processes, and organizational structure. What follows is what that reconstruction actually looks like.

Phase 1: Getting It Working

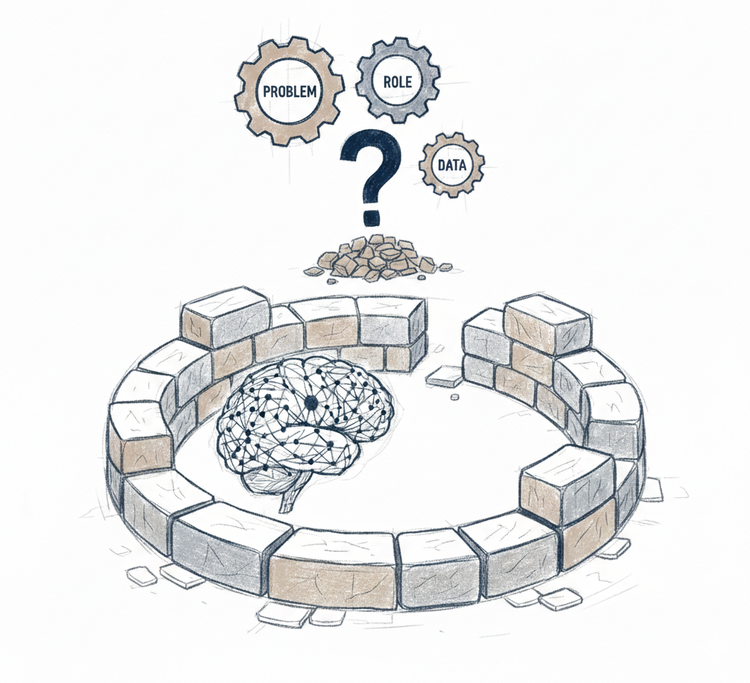

Teams start with what seems straightforward: use an LLM to handle subscription upgrades. High volume, clear patterns. The use case makes sense.

The model comes from a provider service - OpenAI, Anthropic, or similar. Just API integration, right?

Then they begin building what actually makes it work.

Prompt engineering encodes key judgment patterns - when to escalate, what thresholds matter, basic constraints.

Retrieval systems provide access to previous cases and context - customer history, policy documentation, past decisions.

Memory systems maintain ongoing state - conversation history, customer preferences, accumulated context across interactions.

Tool integration connects the LLM to backend systems - it can process upgrades, update accounts, trigger workflows.

Security guards against prompt injection and data leakage.

Review queues catch high-uncertainty cases for manual approval.

Logging tracks decisions for debugging and investigation.
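As a rough illustration of how two of these pieces fit together, the sketch below routes a decision either to automatic execution or to a review queue based on a confidence score, then serializes it as a log line. The `Decision` type, the `route` and `log_record` helpers, and the 0.85 threshold are all hypothetical, chosen only for illustration. It also assumes a usable confidence score exists upstream, which in practice is its own calibration problem.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Decision:
    request_id: str
    action: str        # e.g. "upgrade_plan" (hypothetical action name)
    confidence: float  # assumed to be a score in [0, 1] produced upstream


# Hypothetical starting value; a real threshold would be tuned against outcomes.
REVIEW_THRESHOLD = 0.85


def route(decision: Decision, threshold: float = REVIEW_THRESHOLD) -> str:
    """Return "auto" to execute the action or "review" to queue it for a human."""
    return "auto" if decision.confidence >= threshold else "review"


def log_record(decision: Decision, route_taken: str) -> str:
    """Serialize the decision and its route as one JSON line for later investigation."""
    return json.dumps({**asdict(decision), "route": route_taken}, sort_keys=True)


confident = Decision("req-1", "upgrade_plan", 0.93)
uncertain = Decision("req-2", "upgrade_plan", 0.41)

for d in (confident, uncertain):
    print(log_record(d, route(d)))
```

Even in this toy form, the threshold is a policy decision someone has to own: lowering it raises the automation rate and the risk together.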

The operational work begins: Engineering monitors system health. Operations reviews escalated cases. Product tracks decision quality. Someone owns accountability when issues surface.

It works. The promised value materializes - reduced workload, greater capacity, teams on higher-value work.

Early wins.

Then Reality

First weeks surface what planning missed.

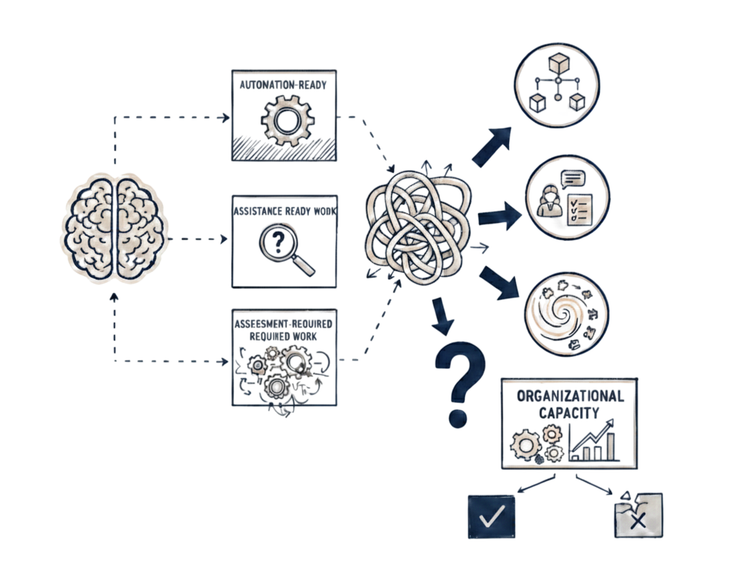

The expected automation rate (80%) lands closer to 30%. But even 30% of decisions handled at scale without human intervention is substantial value. Human effort shifts from routine execution to judgment-heavy cases.

Edge cases proliferate. Legacy pricing during promotions. Custom contracts with unusual terms. Policy exceptions that existed but were not documented. Each one either gets escalated or requires a new constraint.

Costs surprise. Token usage runs 3x projections. Some requests involve extensive context - long conversations, multiple document retrievals, complex reasoning. The cost model assumed averages. Reality has a long tail. Either budgets need revision or context needs limiting, which risks quality.
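A small arithmetic sketch shows how a long tail can produce this kind of gap. The token counts below are invented for illustration, not measured data, and the helper names are hypothetical. The sketch assumes the projection was based on a typical (median) request, while the bill follows the true mean.

```python
from statistics import mean, median


def projected_vs_actual(tokens_per_request):
    """Return (typical-request estimate, true average) in tokens per request."""
    return median(tokens_per_request), mean(tokens_per_request)


def cap_context(tokens_per_request, cap):
    """Simulate a hard context limit by truncating every request to `cap` tokens."""
    return [min(t, cap) for t in tokens_per_request]


# Invented sample: 90 ordinary requests and 10 heavy ones with long context.
sample = [2_000] * 90 + [42_000] * 10

typical, actual = projected_vs_actual(sample)
ratio = actual / typical

# Capping context pulls the average back down, at the price of dropped context.
capped_mean = mean(cap_context(sample, 10_000))

print(f"typical={typical}, actual={actual}, ratio={ratio:.1f}x")
print(f"average with 10k cap={capped_mean}")
```

In this made-up sample, one request in ten is enough to triple the average. The cap restores the budget, but only by truncating exactly the requests that needed the most context.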

Quality varies unpredictably. Technically correct responses miss operational context. Decisions are valid but unwise. The gap between "correct" and "good" becomes visible.

Stabilization

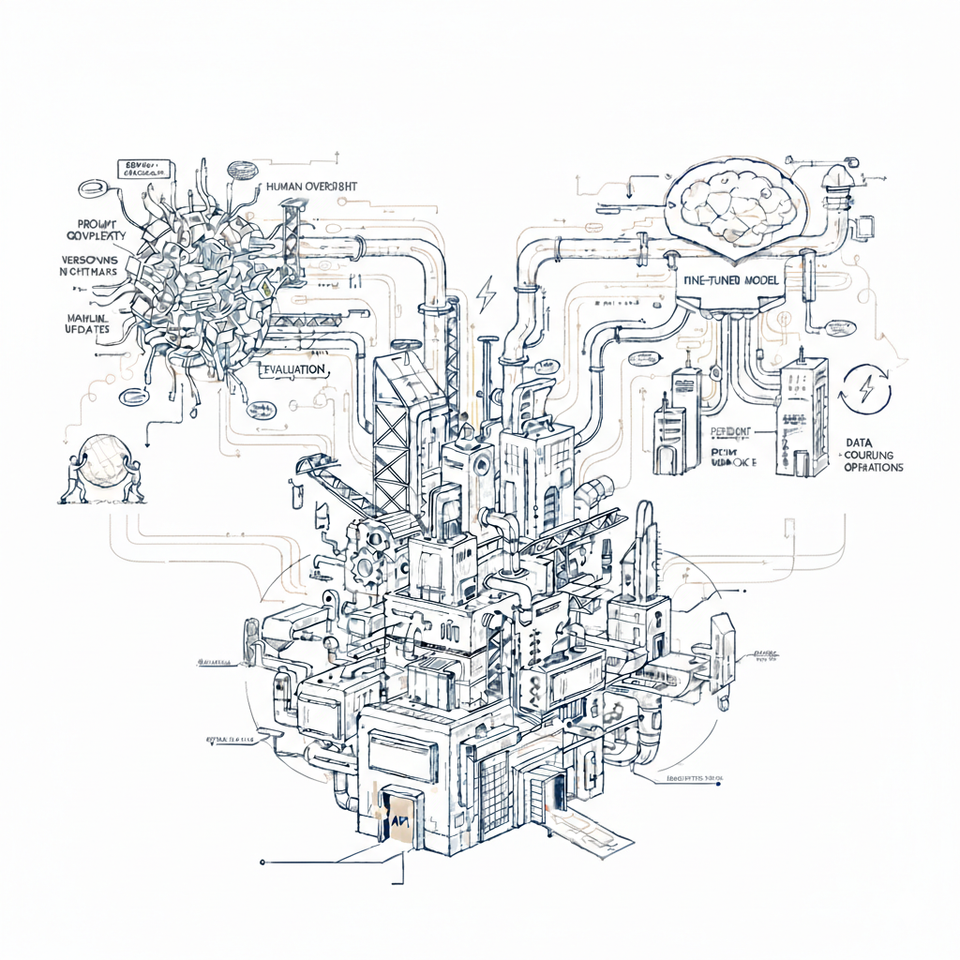

Teams respond with adjustments - refined prompts, tuned confidence thresholds, added guardrails, cost controls, memory policies. Each fix solves immediate problems while revealing deeper requirements.

Infrastructure complexity emerges. Prompts need versioning and rollback. Retrieval quality becomes critical - wrong context misleads. Memory management reveals hard choices about retention and conflict resolution. Tool integration needs validation and error handling - backend failures, partial completions. Review queues need confidence calibration. Logging expands beyond debugging - attribution, cost tracking, model version tracing. Simple components become sophisticated systems.
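To make "prompts need versioning and rollback" concrete, here is a minimal in-memory sketch. `PromptRegistry` and its methods are hypothetical names, not part of any provider SDK. A production version would presumably persist versions, record who published them, and tie each logged decision to the version that produced it.

```python
from typing import List, Optional, Tuple


class PromptRegistry:
    """Append-only store of prompt versions with a movable 'active' pointer."""

    def __init__(self) -> None:
        self._versions: List[str] = []
        self._active: Optional[int] = None  # 1-based version number

    def publish(self, text: str) -> int:
        """Store a new version, make it active, and return its version number."""
        self._versions.append(text)
        self._active = len(self._versions)
        return self._active

    def rollback(self, version: int) -> None:
        """Point 'active' back at an earlier version without deleting anything."""
        if not 1 <= version <= len(self._versions):
            raise ValueError(f"unknown prompt version: {version}")
        self._active = version

    def active(self) -> Tuple[int, str]:
        """Return (version number, prompt text) for the active version."""
        if self._active is None:
            raise LookupError("no prompt published yet")
        return self._active, self._versions[self._active - 1]


registry = PromptRegistry()
v1 = registry.publish("Escalate any upgrade involving a custom contract.")
v2 = registry.publish("Escalate custom contracts and legacy promotional pricing.")
registry.rollback(v1)  # v2 misbehaved; return to the last known-good prompt
print(registry.active())
```

Keeping the store append-only means a rollback never destroys the version under investigation, which matters once logging has to trace decisions back to the prompt that made them.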

Phase 1 ends with a working system.

This is the first layer of converted coordination.

This is just getting it working.