AI Capability Was the Breakthrough. Placement Is the Game.

LLM demos impressed everyone. Context understanding. Great reasoning, planning, execution. The capability is real.

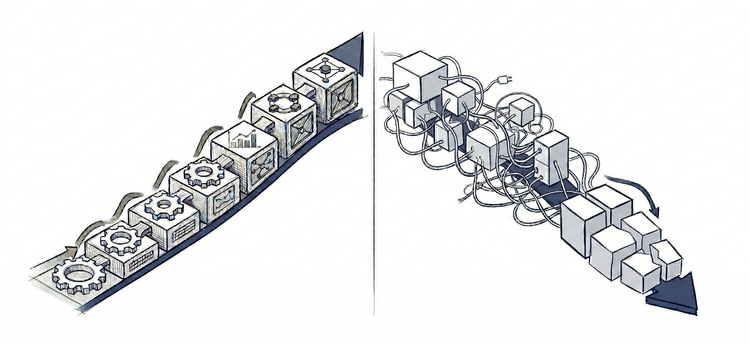

Then production. Same capability. Different outcome.

Perpetual engineering. Expanding operational burden. Costs that only increase. Infrastructure that grows more complex and expensive than the work it replaced.

The difference is predictable. Demos show capability. They reveal less about placement viability.

Many factors shape LLM placement decisions: cost, latency, data, regulation. All are relevant. But none of them determines long-term viability.

What matters is whether the placement remains viable, or whether its complexity and operational burden compound until they exceed the value it delivers or make it too brittle to sustain. Get this wrong, and nothing else matters.

This framework reveals the structural forces that determine that outcome, before deployment.

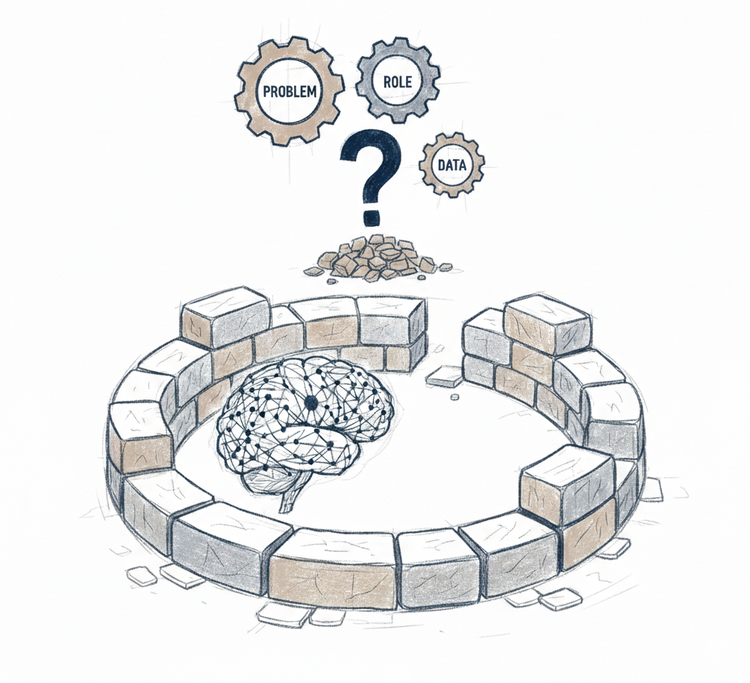

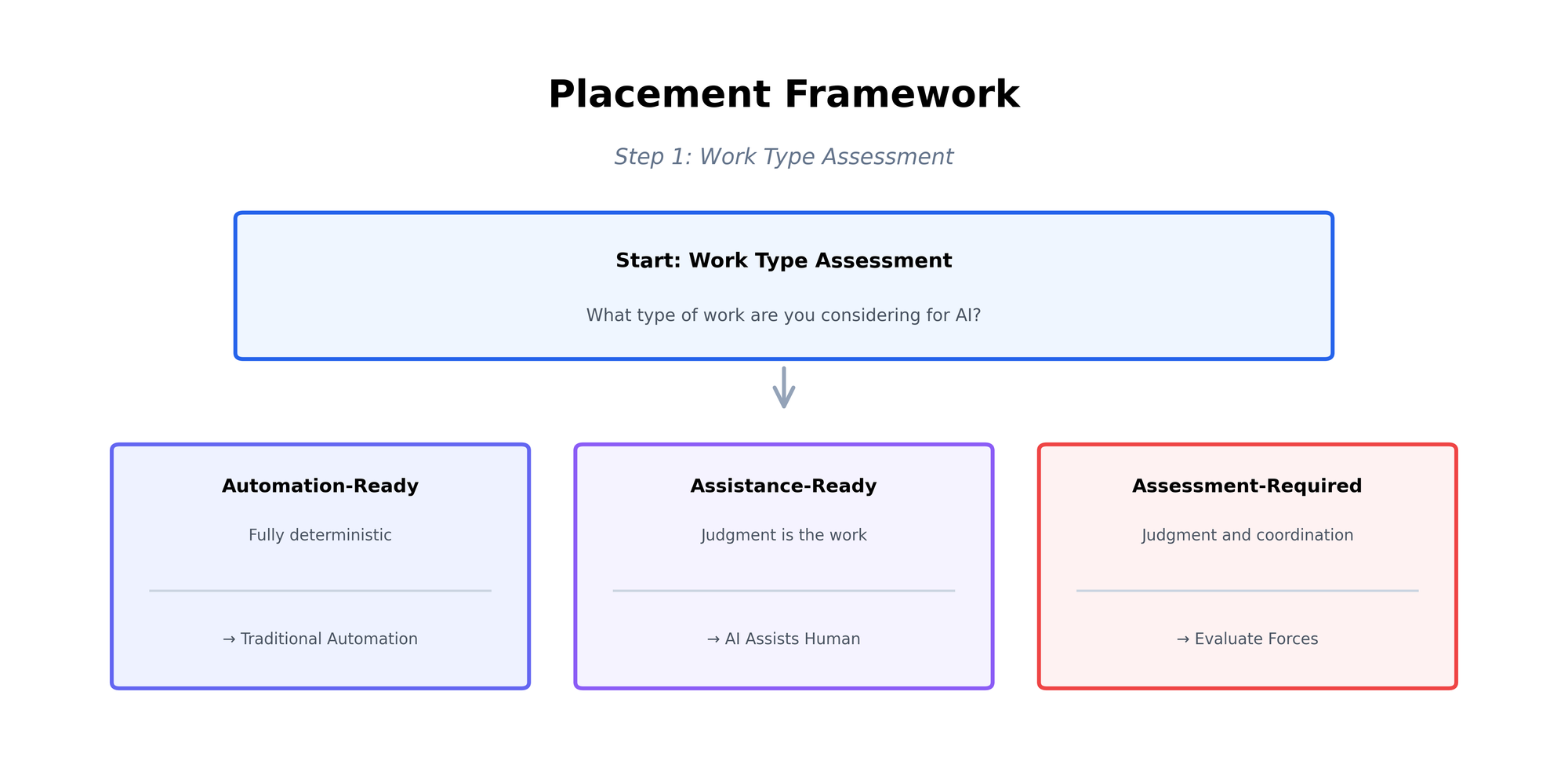

See the Work, Choose the Approach

Placing AI starts with distinguishing three types of work.

Automation-Ready Work: logic can be explicitly specified. Billing calculations, data validation, account provisioning. Any required judgment has already been converted into deterministic rules.

Assistance-Ready Work: judgment is the work itself. Strategic resource allocation, approvals, crisis response. High-stakes decisions that require consequence-grounded judgment, adaptation, and accountability, and that must stay with humans.

Assessment-Required Work: work that appears to be pure task execution but depends on human judgment and coordination. Customer support looks straightforward until legacy contracts appear. Subscription upgrades seem routine until promotional pricing interacts with enterprise agreements. Content moderation looks like rule application until context matters more than rules. Done by people, this work draws on judgment and on capabilities that naturally absorb coordination burden. With AI, that judgment must be encoded and that coordination managed through explicit infrastructure.

The type determines the approach.

Deploy traditional automation for Automation-Ready Work: it is cheaper, simpler, and more reliable.

Use AI to assist with Assistance-Ready Work: informing decisions, surfacing patterns, generating options. Keep human ownership of outcomes.

Assess Assessment-Required Work before deploying AI. This is where real placement risk lives. Demos show task execution, not the human judgment and coordination the work needs. Most placement failures happen here: misreading the work type or choosing the wrong approach.

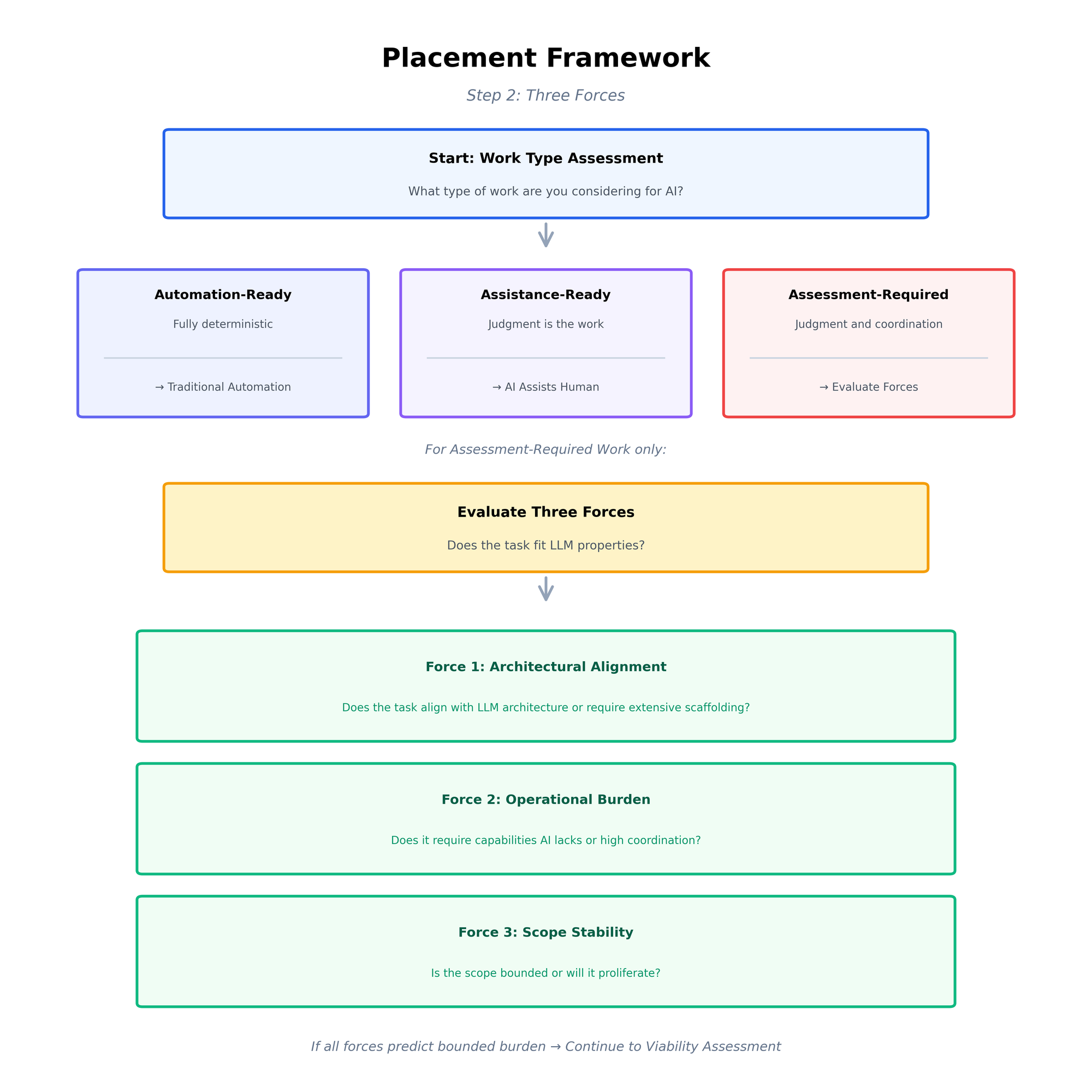

Assessment-Required Work requires deeper evaluation. Three forces strongly indicate whether managing its requirements stays viable.

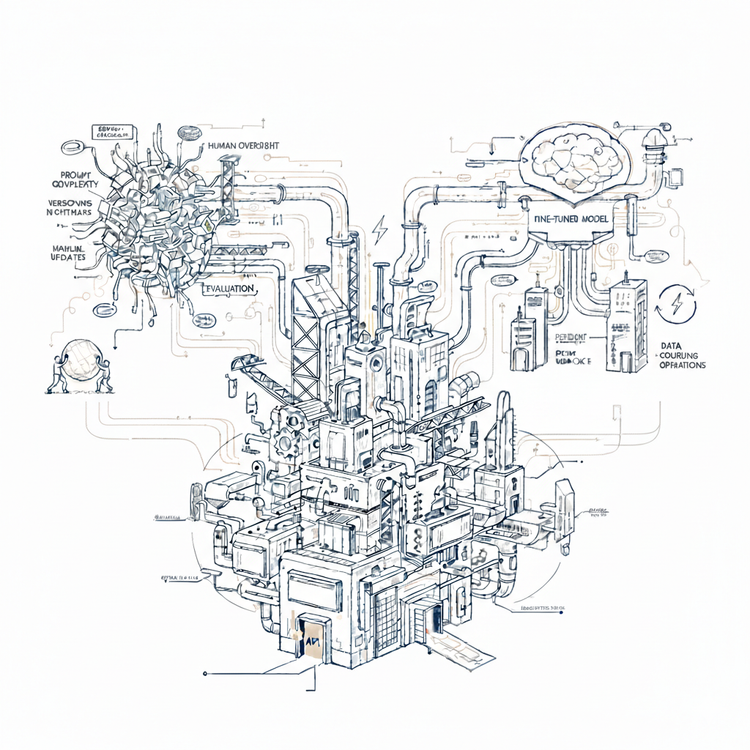

The Three Forces

Some Assessment-Required placements stay manageable. Others compound until burden exceeds value. The difference is predictable from three structural forces.

Force 1: Architectural Alignment

Research synthesis aligns with LLM architecture. Example: aggregate findings from 50 sources on a technical topic. The knowledge involved is description-based, the kind LLMs learn from during training. Each synthesis request is self-contained: no memory across sessions, no persistent goals, no ongoing adaptation. Generate the synthesis, and the task is complete. The architecture enables the work naturally.

Long-term project coordination requires capabilities LLM architecture does not provide. Example: coordinate a multi-week software migration across teams. This requires memory across weeks, persistent goals, practical grounding, and continuous adaptation based on outcomes. Choosing this task means engineering every missing capability: memory systems, retrieval, orchestration, validation, guardrails, retraining. The burden compounds as requirements and the domain evolve.

The signal: If extensive technical scaffolding is required just to get started, the task is misaligned with LLM architecture. Many organizations cannot build or sustain this infrastructure. Mismatched tasks create expense that begins at deployment and persists.

Force 2: Operational Burden

Two dimensions determine operational burden: the human capabilities the LLM lacks, and coordination intensity.

Invoice data extraction requires little of either. Extract line items, amounts, dates, and vendor information from invoices. The LLM processes documents and outputs structured data. Errors are visible: amounts do not match, dates are malformed. Consequences are contained: errors are caught in validation. No cross-functional coordination is needed. Light oversight suffices.
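To make "errors are visible" concrete, here is a minimal Python sketch of the kind of validation step that catches them. It assumes the LLM returns a hypothetical structured record (vendor, ISO date, line-item amounts, total); the field names and checks are illustrative, not a prescribed schema. Every check is deterministic, which is part of why light oversight can suffice for this task.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass
class ExtractedInvoice:
    # Hypothetical shape of the LLM's structured output; field names are illustrative.
    vendor: str
    invoice_date: str          # expected ISO format, e.g. "2024-03-31"
    line_items: list[Decimal]  # extracted line-item amounts
    total: Decimal             # extracted invoice total


def validate(inv: ExtractedInvoice) -> list[str]:
    """Return the problems found; an empty list means the record passes."""
    problems = []
    if not inv.vendor.strip():
        problems.append("missing vendor")
    try:
        date.fromisoformat(inv.invoice_date)
    except ValueError:
        problems.append(f"malformed date: {inv.invoice_date}")
    # Decimal avoids float rounding when comparing money amounts.
    if sum(inv.line_items, Decimal("0")) != inv.total:
        problems.append("line items do not sum to total")
    return problems


ok = ExtractedInvoice("Acme", "2024-03-31", [Decimal("10.00"), Decimal("5.50")], Decimal("15.50"))
bad = ExtractedInvoice("Acme", "2024-13-01", [Decimal("10.00")], Decimal("12.00"))
print(validate(ok))   # []
print(validate(bad))  # ['malformed date: 2024-13-01', 'line items do not sum to total']
```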

Policy exception handling demands both. A customer requests a refund outside the standard policy window. That requires human capabilities the LLM lacks: consequence-grounded judgment about precedent and revenue impact, accountability for outcomes, self-correction when errors surface. It also requires high coordination intensity: customer success, finance, legal, and sometimes leadership must align on every exception through escalation paths and approval chains.

When a task requires capabilities the LLM lacks, or high coordination intensity, permanent operational infrastructure must compensate: continuous human review, multi-stakeholder coordination processes, escalation management, accountability tracking. The operational burden is substantial from deployment and usually does not scale down.

The signal: If you are planning for human intervention (judgment calls, oversight, corrections) or coordination infrastructure (review queues, escalation paths, approvals, audit trails), you are compensating rather than automating. The operational burden is permanent.

Force 3: Scope Stability

Customer support triage has bounded scope. Classify incoming tickets and route them to the appropriate teams. Billing issues route to finance. Technical errors route to engineering. Account access problems route to customer success. Variations exist: tickets spanning multiple categories, ambiguous language, edge cases. These are finite and discoverable. The problem space is knowable upfront: classification categories and routing logic can be enumerated. Coverage increases over time until it is complete.
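Bounded scope is what lets routing be written down as a finite table. A minimal Python sketch, assuming the LLM emits one category label per ticket; the label strings, queue names, and the `manual_triage` fallback are hypothetical. Labels outside the enumerated set are not guessed at: they go to a fallback queue, which makes each gap visible so it can be added to the enumeration.

```python
from enum import Enum


class Category(Enum):
    # Hypothetical, enumerable category set; bounded scope means it can be listed upfront.
    BILLING = "billing"
    TECHNICAL = "technical"
    ACCOUNT_ACCESS = "account_access"


# Routing logic is an explicit, finite mapping from category to team queue.
ROUTES = {
    Category.BILLING: "finance",
    Category.TECHNICAL: "engineering",
    Category.ACCOUNT_ACCESS: "customer_success",
}

FALLBACK_QUEUE = "manual_triage"  # hypothetical queue for labels outside the known set


def route(label: str) -> str:
    """Map a classifier's label (e.g. an LLM's output) to a team queue."""
    try:
        return ROUTES[Category(label)]
    except ValueError:
        # Category(label) raises ValueError for unknown labels.
        return FALLBACK_QUEUE


print(route("billing"))  # finance
print(route("legal"))    # manual_triage
```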

Enterprise contract negotiations have proliferating scope. A customer requests a mid-cycle upgrade with a legacy discount. The legacy discount interacts with current promotional pricing. Enterprise tier definitions changed last quarter. The decision depends on precedent, strategic account status, competitive pressure, and revenue recognition timing, factors that keep emerging. The problem is open. Unstated context matters. Each scenario creates new combinations. Rules interact unpredictably. Coverage never becomes complete, despite continuous additions.

Bounded scope stays manageable. Proliferating scope compounds: new cases keep appearing, rules multiply, and teams react with more constraints and validation layers. The maintenance burden grows until maintaining the system costs more than the value it provides.

The signal: If you cannot enumerate all scenarios upfront, if business changes create new combinations or cascade through existing logic, or if customer behavior is unpredictable or evolving, scope will proliferate. Coverage will never be complete, however many rules you add.
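The three signals can be read as a simple checklist. A minimal Python sketch, assuming each signal has been answered as a yes/no judgment by the team; the field names and warning strings are hypothetical. It does not score the work; it only lists which forces point toward compounding burden. Note that Force 2 fires on either dimension, matching the "OR" in its description.

```python
from dataclasses import dataclass


@dataclass
class ForceSignals:
    # Hypothetical yes/no answers to the three signal questions.
    needs_extensive_scaffolding: bool        # Force 1: memory, retrieval, orchestration just to start?
    needs_missing_human_capabilities: bool   # Force 2: judgment, accountability, self-correction?
    needs_coordination_infrastructure: bool  # Force 2: review queues, escalations, approvals, audit trails?
    scope_bounded: bool                      # Force 3: scenarios enumerable upfront and stable under change?


def force_warnings(s: ForceSignals) -> list[str]:
    """List the forces that point toward compounding burden; an empty list means bounded."""
    warnings = []
    if s.needs_extensive_scaffolding:
        warnings.append("architectural misalignment")
    # Force 2 fires on either dimension, not only when both are present.
    if s.needs_missing_human_capabilities or s.needs_coordination_infrastructure:
        warnings.append("permanent operational burden")
    if not s.scope_bounded:
        warnings.append("proliferating scope")
    return warnings


# Hypothetical answers: a triage placement, and an exception-handling placement
# assumed (for illustration) to also have proliferating scope.
triage = ForceSignals(False, False, False, True)
exceptions = ForceSignals(False, True, True, False)
print(force_warnings(triage))      # []
print(force_warnings(exceptions))  # ['permanent operational burden', 'proliferating scope']
```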

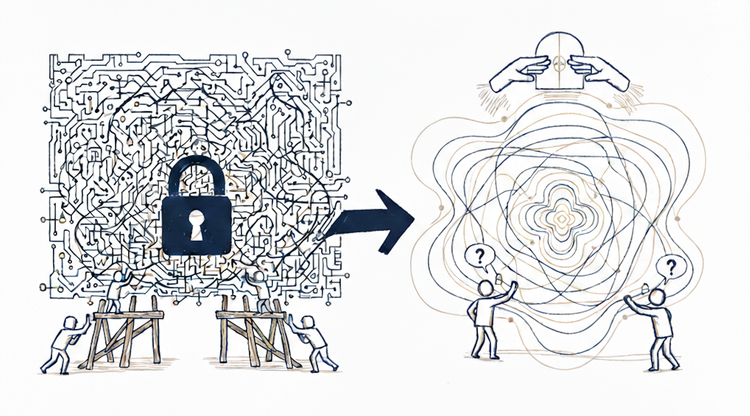

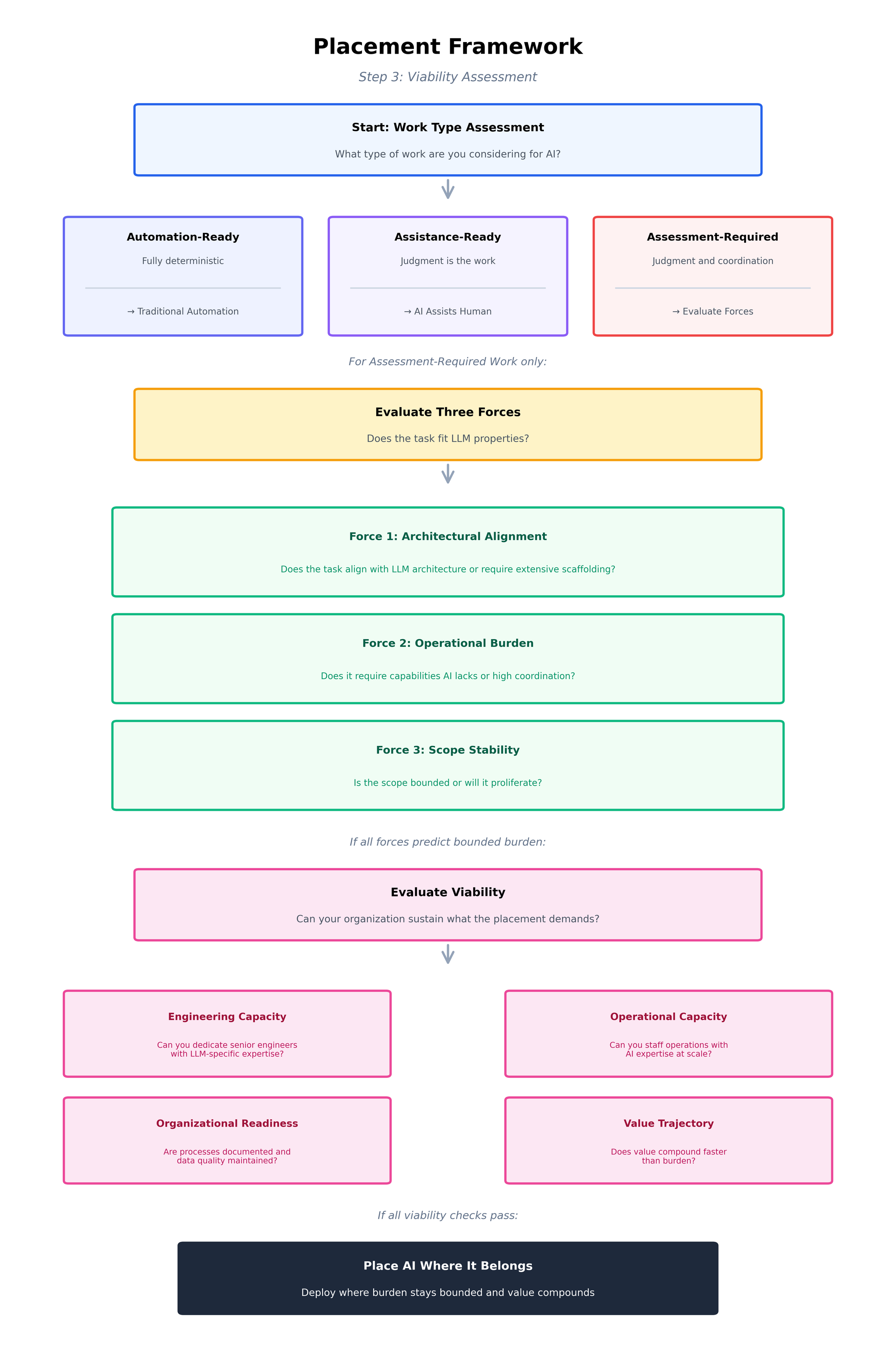

Evaluate Viability

Two organizations deploy the same LLM for the same task. The forces predict a bounded placement for both. One succeeds. One fails.

The difference is organizational capability.

Engineering capacity: Architectural Alignment revealed the technical scaffolding requirements. Building these systems is hard. Keeping them working while models and the business evolve is harder.

The work demands senior engineering talent with skills many teams lack: prompt engineering, context optimization, memory and retrieval tuning, data pipelines, and evaluation and testing methodologies for probabilistic systems. Some are emerging disciplines, others established specializations, but even the established techniques lack mature playbooks when applied to LLM deployment.

Test: Add this to your roadmap as a permanent need, not a project. Can you dedicate senior engineers? Can you hire or develop the required skills? If this competes with core development or stretches an already-thin team, the placement fails regardless of task fit.

Operational capacity: Operational Burden revealed the requirements for human oversight and coordination, such as ongoing monitoring, review management, escalations, and multi-stakeholder alignment.

That work demands people who understand probabilistic AI behavior. Traditional operations teams manage deterministic systems. AI systems require different expertise: interpreting drift, calibrating confidence thresholds, distinguishing edge cases from systematic failures.

Test: Model the steady state. If Operational Burden showed heavy requirements, expect permanent staffing for oversight, escalation, and multi-stakeholder coordination. When volume scales, staffing must scale proportionally. Many teams start understaffed "until it stabilizes" and discover it never does. Can you hire or develop people with AI operations expertise? Can you sustain this trajectory?

Organizational readiness: AI amplifies what exists. Undocumented processes, poor coordination, missing or inconsistent historical data, tribal knowledge - these do not disappear with AI deployment. They scale.

Test: Explain your workflow to someone external. Assess your historical data quality. Can routine decisions be made by people without direct coordination?

If any of these reveal gaps, inconsistencies, dependence on specific people, or "we just know" patterns, AI will surface the problems at scale. Organizations that cannot document processes, maintain data quality, or coordinate systematically cannot sustain AI within them. Fix organizational basics first, or placement fails regardless of task fit.

Value trajectory: Does value compound faster than burden? If Architectural Alignment showed misalignment, engineering cost persists. If Operational Burden showed heavy requirements, staffing cost grows with volume. If Scope Stability showed proliferation, complexity cost compounds.

Test: Project costs 12 months forward. Engineering: permanent team allocation for continuous system evolution. Operations: staffing that scales with volume. Scope management: growing complexity from proliferating rules and edge cases. Compare these cost trajectories against value creation. If burden grows faster than value at any point, placement becomes unsustainable regardless of early success.
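The 12-month projection is simple arithmetic once the three cost lines are written down. A minimal Python sketch, assuming (hypothetically) that engineering cost is flat, operations cost scales linearly with volume, scope cost compounds at a fixed monthly rate, and value is linear in volume. It reports the first month in which projected cost exceeds projected value. All figures in the example are invented for illustration, not benchmarks.

```python
from typing import Optional


def first_unsustainable_month(
    months: int,
    volume_start: float,
    volume_growth: float,        # monthly volume growth, e.g. 0.05 = 5%
    value_per_unit: float,
    engineering_monthly: float,  # flat: permanent team allocation
    ops_cost_per_unit: float,    # scales linearly with volume
    scope_cost_start: float,     # monthly cost of maintaining rules and edge cases
    scope_growth: float,         # monthly compounding rate of scope cost
) -> Optional[int]:
    """Return the first month (1-based) in which projected cost exceeds value, else None."""
    for m in range(months):
        volume = volume_start * (1 + volume_growth) ** m
        value = volume * value_per_unit
        cost = (
            engineering_monthly
            + volume * ops_cost_per_unit
            + scope_cost_start * (1 + scope_growth) ** m
        )
        if cost > value:
            return m + 1
    return None


# Invented figures: identical volume and value, with and without compounding scope cost.
print(first_unsustainable_month(12, 1000, 0.05, 10, 4000, 3, 1000, 0.25))  # 10
print(first_unsustainable_month(12, 1000, 0.05, 10, 4000, 3, 1000, 0.0))   # None
```

In the first call the placement looks healthy for nine months before scope cost overtakes it, which is the "early success" the test warns about.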

The viability signal: Organizational readiness is a gate; gaps there eliminate a placement before the rest of the assessment begins. For candidates that pass it, you must clearly answer "yes" to engineering capacity, operational capacity, and positive value trajectory. Without organizational capacity, even structurally sound placements fail.
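The order of the tests matters: readiness is checked first, and the remaining three must all be a clear yes. A minimal Python sketch of that decision rule, assuming each test has already been reduced to a yes/no answer; the class and field names are hypothetical, and anything short of a clear yes is meant to be recorded as `False`.

```python
from dataclasses import dataclass


@dataclass
class ViabilityAnswers:
    # Hypothetical yes/no answers to the organizational tests.
    organizationally_ready: bool  # documented processes, data quality, systematic coordination
    engineering_capacity: bool
    operational_capacity: bool
    positive_value_trajectory: bool


def evaluate(a: ViabilityAnswers) -> str:
    # Readiness is a gate: failing it ends the evaluation before the other tests.
    if not a.organizationally_ready:
        return "not viable: fix organizational basics first"
    checks = [
        ("engineering capacity", a.engineering_capacity),
        ("operational capacity", a.operational_capacity),
        ("value trajectory", a.positive_value_trajectory),
    ]
    # All three must be a clear yes; report every one that is not.
    missing = [name for name, ok in checks if not ok]
    if missing:
        return "not viable: " + ", ".join(missing)
    return "viable"


print(evaluate(ViabilityAnswers(True, True, True, False)))  # not viable: value trajectory
```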

The Decision

AI capability is no longer the constraint. Placement is.

Teams that succeed assess work type, evaluate structural forces, and test organizational capacity. They place AI where burden stays bounded and value compounds - not where carefully staged demos look impressive.

They recognize that task fit does not guarantee success. Organizational capacity does.

AI capability was the breakthrough. Placement is the game. Now you have the framework to play it.