AI Works. The Hard Part Is Deployment.

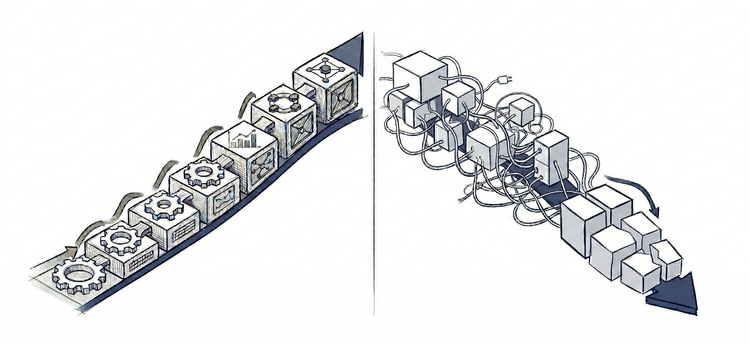

When placed and implemented correctly, LLMs deliver substantial business value - reduced workload, increased revenue, lower operational costs.

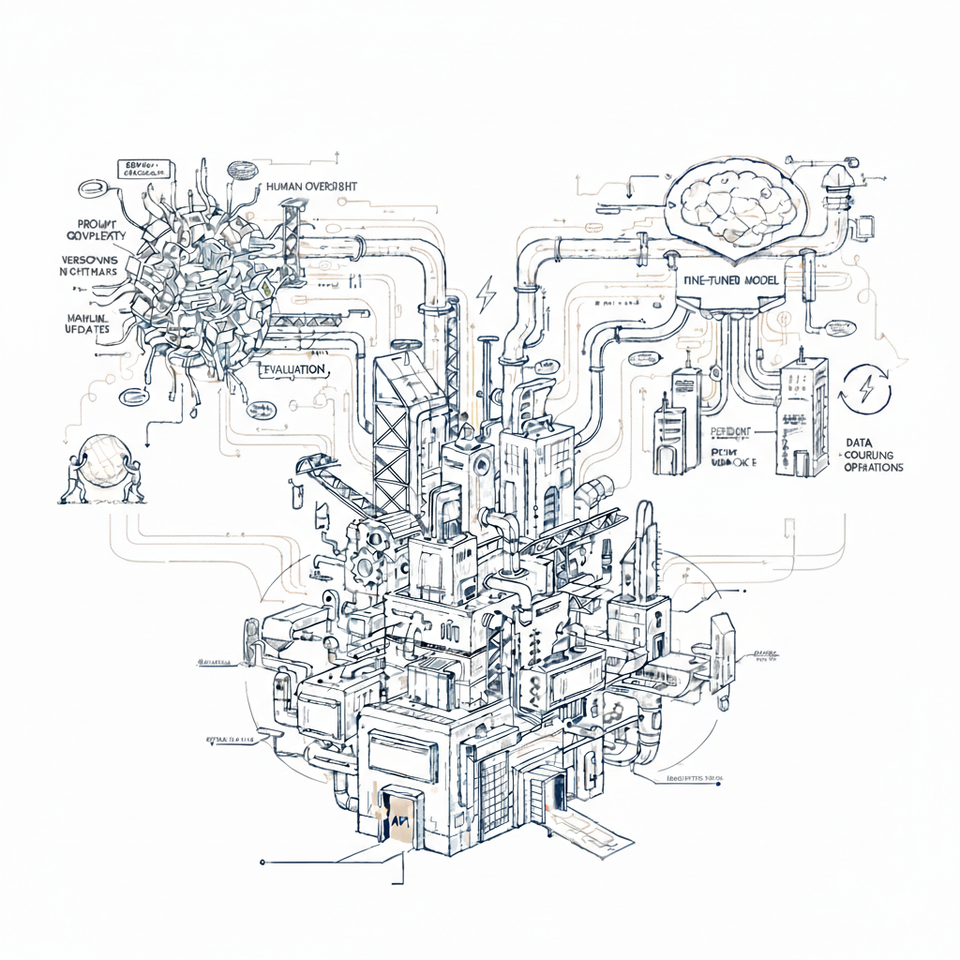

Sustaining that value requires understanding what deployment actually demands. The "invisible" work humans provided naturally does not disappear when LLMs replace them. It converts into technical and organizational infrastructure that must be built, maintained, and staffed.

This is not an obstacle. It is a strategic filter. Teams that understand this can place AI where the value justifies the investment. Teams that do not, discover the cost too late.

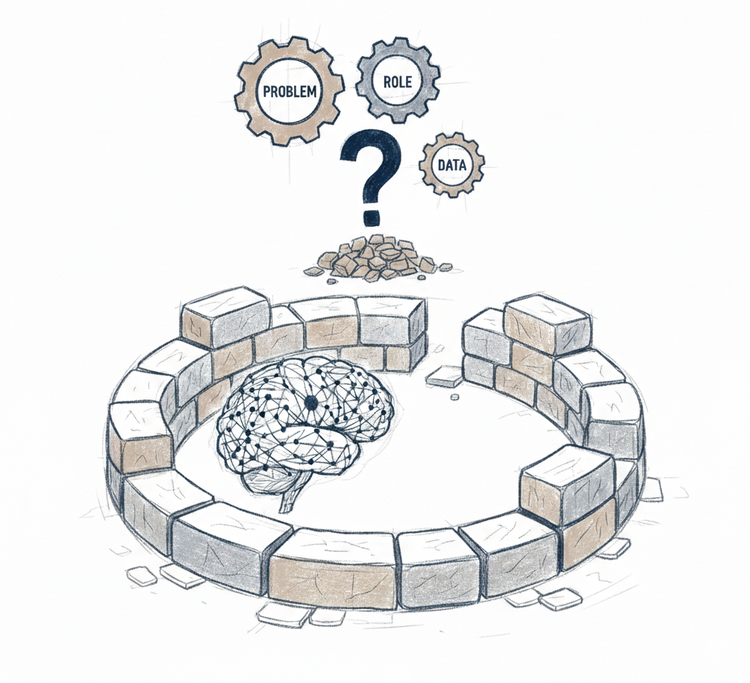

The filter becomes concrete through three questions: How do we get this working? How do we make it reliable? How do we make it sustainable?

What each answer demands - the architecture, the operations, the coordination - reveals what teams are actually signing up for.

In practice, this means every architectural constraint we explored in the first Understanding Intelligence series - together with the "invisible" capabilities humans provided - must be rebuilt as software, processes, and organizational structure. What follows is what that reconstruction actually looks like.

Phase 1: Getting It Working

Teams start with what seems straightforward: use an LLM to handle subscription upgrades. High volume, clear patterns. The use case makes sense.

Provider services - OpenAI, Anthropic, or similar. Just API integration, right?

Then they begin building what actually makes it work.

Prompt engineering encodes key judgment patterns - when to escalate, what thresholds matter, basic constraints.

Retrieval systems provide access to previous cases and context - customer history, policy documentation, past decisions.

Memory systems maintain ongoing state - conversation history, customer preferences, accumulated context across interactions.

Tool integration connects the LLM to backend systems - it can process upgrades, update accounts, trigger workflows.

Security guards against prompt injection and data leakage.

Review queues catch high-uncertainty cases for manual approval.

Logging tracks decisions for debugging and investigation.

The operational work begins: Engineering monitors system health. Operations reviews escalated cases. Product tracks decision quality. Someone owns accountability when issues surface.

It works. The promised value materializes - reduced workload, greater capacity, teams on higher-value work.

Early wins.

Then Reality

First weeks surface what planning missed.

The expected automation rate (80%) lands closer to 30%. But even 30% of decisions handled at scale without human intervention is substantial value. Human effort shifts from routine execution to judgment-heavy cases.

Edge cases proliferate. Legacy pricing during promotions. Custom contracts with unusual terms. Policy exceptions that existed but were not documented. Each one either gets escalated or requires a new constraint.

Costs surprise. Token usage runs 3x projections. Some requests involve extensive context - long conversations, multiple document retrievals, complex reasoning. The cost model assumed averages. Reality has a long tail. Either budgets need revision or context needs limiting - risking quality.

Quality varies unpredictably. Technically correct responses miss operational context. Decisions are valid but unwise. The gap between "correct" and "good" becomes visible.

Stabilization

Teams respond with adjustments - refined prompts, tuned confidence thresholds, added guardrails, cost controls, memory policies. Each fix solves immediate problems while revealing deeper requirements.

Infrastructure complexity emerges. Prompts need versioning and rollback. Retrieval quality becomes critical - wrong context misleads. Memory management reveals hard choices about retention and conflict resolution. Tool integration needs validation and error handling - backend failures, partial completions. Review queues need confidence calibration. Logging expands beyond debugging - attribution, cost tracking, model version tracing. Simple components become sophisticated systems.

Phase 1 ends with a working system.

This is the first layer of converted coordination.

This is just getting it working.

Phase 2: Making It Reliable

The system works. Weeks pass. Then reliability problems emerge that Phase 1 infrastructure cannot address.

Performance inconsistency becomes visible. The same type of request gets handled differently across instances. Small phrasing differences trigger divergent paths. Retrieval returning slightly different context creates different outcomes.

"It worked yesterday" becomes common.

Silent failures pass all checks. Constraints satisfied. No uncertainty triggered. Validation passed. But the decision is operationally unwise in ways only domain expertise would recognize. Discovered later through customer complaints or downstream impact. These failures look like success to Phase 1 infrastructure.

Confidence scoring does not predict quality. Some responses are excellent. Others miss important context. The LLM's confidence does not correlate with actual quality. High confidence responses can be tone-deaf. Phase 1's confidence scoring does not predict what matters operationally.

Edge case combinations break. Enterprise customer with promotional pricing and custom payment terms - each individually handled, but their combination creates ambiguity Phase 1's constraints did not anticipate. Reactive fixes do not compose.

Memory drift creates inconsistency. Conversation history from weeks ago contradicts current policy. Accumulated customer context from previous interactions conflicts with their current status. The LLM acts on stale context, making decisions appropriate to past reality but wrong for present state.

Making it reliable requires systematic infrastructure, not more adjustments.

Evaluation and Controls

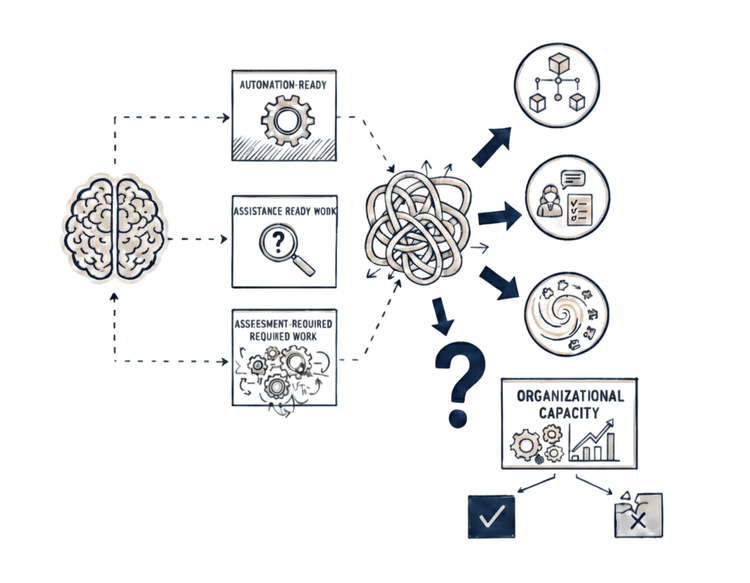

Teams either continue patching - reactive adjustments to each component as failures appear - or build systematic infrastructure. The first path leads to what Escaping the AI Rule Maze mapped - reactive scaffolding that proliferates until maintaining it exceeds the value it provides. The second requires sustained investment.

Evaluation infrastructure: Test datasets with expected outcomes - representative scenarios covering common cases and edge cases. Automated evaluation runs changes before production. But quality has dimensions automation cannot assess - appropriateness, tone, contextual fit - requiring human evaluation. Quality metrics infrastructure for tracking escalation precision, consistency, response appropriateness. Regression detection systems catching when improvements degrade other scenarios.

Sophisticated controls: Advanced confidence calibration using multi-signal uncertainty detection - model confidence, retrieval quality, scenario characteristics, historical performance. Layered guardrails with pre-execution checks (parameter validation, constraint verification), post-execution checks (outcome verification), and context-aware escalation (not just uncertainty - also customer segment, financial impact, compliance requirements). Prompt testing frameworks and rollback procedures. Output validation frameworks with semantic checks and constraint verification. Security extends across evaluation infrastructure, guardrails, and validation layers - prompt injection, data leakage, unauthorized access, and context poisoning.

The Compounding Challenge

Two systems must stay synchronized - the LLM's behavior and the constraint layers. Synchronization becomes explicit coordination work.

The operational work intensifies across functions:

Engineering maintains evaluation systems and sophisticated controls. Debugs regressions. Investigates incidents. Attribution becomes forensic work across versioned systems.

Operations conducts human evaluation continuously. Reviews edge cases that slip through automated controls. Manages escalation queues with more sophisticated triage.

Product maintains test datasets as patterns evolve. Defines quality metrics and acceptable tradeoffs. Coordinates when prompt changes require validation updates, which require test refreshes, which surface new requirements.

Testing becomes sophisticated - changes must be validated against the full system with all interactions. Test suites grow. Release velocity depends on testing capacity.

Phase 2 ends with a more reliable system for teams that invested systematically.

This is the second layer of coordination conversion - evaluation, controls, and human oversight becoming permanent infrastructure and operations.

The question becomes: what happens when the domain evolves?

Phase 3: Making It Sustainable

The system is reliable. It works within the current business workflows and rules. Then business evolves - new product tiers launch, policies change to match competitive pressure, approval requirements shift based on regulatory updates, customer patterns shift, domain terminology evolves. What worked reliably for yesterday's business requirements struggles with today's.

Phase 1 and 2 infrastructure - prompts, validation layers, test datasets, constraints - all built for current reality. Business changes cascade through everything. Prompts need new examples and revised logic. Validation layers need different thresholds. Test datasets need refreshing. Constraints need alignment with new business rules.

The coordination spans Product, Engineering, Operations, Legal. Changes that used to take days now take weeks as teams assess impact across interconnected systems.

At this point the question is no longer whether the coordination conversion works - but which form of it the organization can sustain.

The Fork

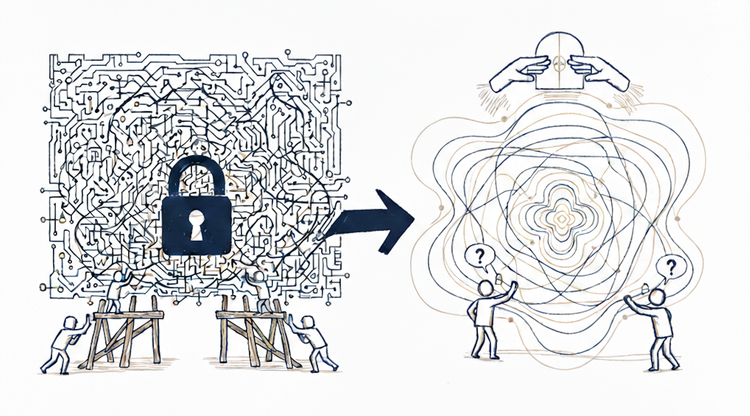

Path A: Maintain the scaffolding. Continue with base models and growing external infrastructure. Each business change means updating prompts, adjusting constraints, modifying validation logic, refreshing test datasets.

What happens:

Prompts grow complex. More examples for new scenarios. Additional conditional logic for new business rules. What started as focused instructions becomes extensive documentation of business logic.

Constraints proliferate and interact. Each new constraint must work with existing ones. Interactions become unpredictable - a change to promotional logic affects enterprise pricing which affects trial period handling.

Change velocity degrades. Simple updates now require impact assessment, validation updates, test refreshes, regression checking, coordinated deployment. Teams that could iterate daily now take weeks.

Memory management compounds. Schemas need updating as business logic evolves. Memory from old business rules misleads decisions under new rules. Each business change cascades through memory systems.

Version management becomes complex. Which prompt version works with which constraint set? Which validation logic matches which business rules? Synchronization across versioned components becomes coordination overhead that compounds with every business change.

The scaffolding that enabled Phase 1 and 2 now compounds with business complexity. Eventually, maintaining it consumes more resources than the value it provides.

Path B: Fine-tune to internalize knowledge. Move business logic into the model through retraining. Simpler prompts. Fewer external constraints. Business knowledge embedded rather than scaffolded.

What this requires:

Model drift detection. Track when the model's internalized knowledge diverges from current business requirements. Statistical methods detect when retraining is needed before performance degrades. Someone monitors continuously, investigates alerts, determines intervention timing.

Data collection infrastructure. Pipelines capturing production decisions continuously at scale. Quality controls, versioning, storage. Permanent infrastructure creating training datasets from operational experience.

Data labeling operations. Domain experts annotate which decisions were correct, which required override. Annotation guidelines, quality assurance. Labeling must keep pace with business evolution - permanent operational function.

Retraining cycles. Systematic, scheduled updates. Determining optimal frequency. Safe rollout procedures. Comparison against current version. Rollback capability when updates degrade performance. Each cycle requires comprehensive validation.

But embedding knowledge in model weights sacrifices interpretability. Path A's scaffolding is transparent - read prompts, trace constraints, understand why decisions happened. Path B's internalized knowledge is opaque. When something goes wrong, Path A lets you identify which rule broke. Path B requires inferring from model behavior. Debugging becomes investigation rather than inspection. Security auditing faces the same trade-off - under Path A, attack surfaces are traceable. Under Path B, they become partially unknowable. For teams requiring auditability, regulatory compliance, or direct control over decision logic, this loss of transparency can outweigh Path B's sustainability benefits.

Path B stays manageable as business evolves. Simpler prompts mean faster iteration. Internalized knowledge means fewer interacting constraints. Changes require retraining but do not cascade through brittle scaffolding. The choice depends on whether the organization values transparency and control or sustainable adaptation at scale.

Phase 3 reveals sustainability as deliberate choice.

This is the third layer of coordination conversion - adapting to business evolution becomes permanent organizational work.

The choice is not whether coordination continues - it does - but which form the organization can sustain.

Closing

Three phases. Three layers of coordination conversion. Each revealing what sustaining AI's value actually demands.

The gap is structural - AI operates with architectural constraints that do not resolve through engineering and lacks the human capabilities that naturally absorbed coordination work. This does not change. What changes is whether teams understand it before or after deployment.

Teams that understand what each phase demands build AI that scales - capturing value where it compounds. Teams that do not, discover the cost too late.