From Foundation to Production: Discipline Is What Makes AI Compound

The foundation is not enough. Many AI deployments that get the foundation right still fail in production. The cause is usually the same - how teams build on it.

The foundation determines whether you can succeed. Implementation determines whether you do.

What follows are design decisions that separate AI systems that compound value from those that compound cost and operational burden.

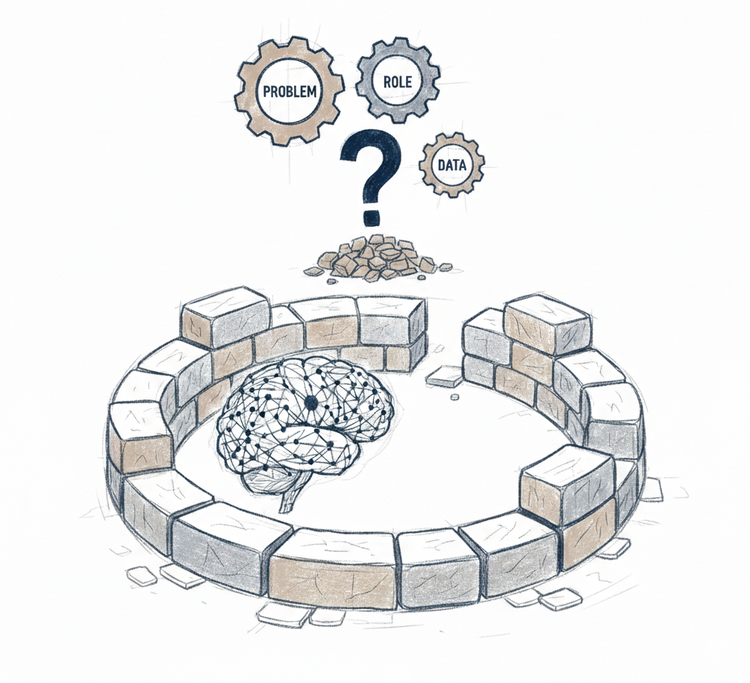

Scope Design

Placement evaluation assessed whether scope would remain bounded or proliferate. Scope design determines whether that assessment holds in practice - and what to do when it does not.

Compliance and regulatory constraints are not design variables. They are fixed boundaries that scope design must respect from the start. Everything else reveals itself through operation.

Three principles determine whether scope remains disciplined - from initial deployment through production.

Deploy to Diagnose

Many teams deploy to achieve immediate coverage. The problem: organizations amplify what they feed AI. Undocumented processes surface as inconsistent outputs. Tribal knowledge appears as unexplained performance variation. Edge cases handled informally become production failures.

Deploying narrow scope deliberately reveals how the organization actually operates before those realities compound.

"Narrow" means a single, fully contained use case where every failure is visible and attributable - not "customer support" but "password resets for standard accounts", not "claims processing" but "water damage claims under $10k with single coverage type".
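One way to keep a narrow scope honest is to write it down as an explicit predicate, so that anything outside it goes to a human by default. The sketch below is illustrative only: the `Claim` record, its field names, and the `route` helper are assumptions made for this example, not part of any particular claims system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    # Hypothetical fields, chosen only to mirror the example in the text.
    claim_type: str                  # e.g. "water_damage"
    amount_usd: float
    coverage_types: tuple[str, ...]  # coverage types involved in the claim


def in_scope(claim: Claim) -> bool:
    """True only for water damage claims under $10k with a single coverage type."""
    return (
        claim.claim_type == "water_damage"
        and claim.amount_usd < 10_000
        and len(claim.coverage_types) == 1
    )


def route(claim: Claim) -> str:
    """Send in-scope claims to the AI path; everything else goes to a human."""
    return "ai" if in_scope(claim) else "human"
```

Because the scope lives in one function, every out-of-scope case is visible as a routing decision rather than as an unexplained AI failure.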

When the system breaks, the failure is information. It reveals process ambiguity that was never documented, decision criteria that lived only in specific people’s heads, coordination patterns that remained invisible until the AI could not replicate them.

Starting small is not timidity. It is the cheapest way to discover what production will actually demand.

Boundaries From Operation

Most teams specify AI behavior upfront. It feels thorough. It is not - it is building on assumptions while calling them constraints.

No amount of planning reveals what production will actually demand from an AI system. Edge cases that seem critical never appear. Constraints that seemed unnecessary become essential. Ambiguities that looked manageable block entire workflows. The gap between how AI is expected to behave and how it actually behaves is only visible from operation.

Start with minimal constraints. Let the system break - deliberately and within a contained scope. Each failure reveals what specification cannot: the context the model truly needs, the constraints that genuinely apply, the points where human judgment is irreducible.

Add only what operation demonstrates is necessary. Nothing more.

Boundaries specified upfront are assumptions dressed as design. Boundaries discovered through operation are the design itself.

Capacity Before Coverage

Scope expansion feels like a coverage decision. It is not. It is a signal-reading decision.

Production tells you when expansion is viable - automation rate stable, escalation patterns predictable, operational burden quantified. These signals mean current scope is understood. Expansion from that position is grounded. Expansion from any other position is compounding what is already unresolved.

Everything else - leadership pressure, compelling new use cases, coverage targets - is noise.

Production signals contraction too. Escalation volume exceeding capacity, automation rate declining, coordination burden growing without corresponding value - scope needs to shrink, not expand. Contraction is not failure. It is scope design responding to evidence.
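The expansion and contraction signals above can be read mechanically. The following is a minimal sketch under stated assumptions: the `WeeklySignals` fields, the four-week window, and the `stability_tolerance` threshold are all hypothetical choices for illustration, and a real deployment would also need to quantify operational burden, which is omitted here.

```python
from dataclasses import dataclass
from statistics import pstdev


@dataclass
class WeeklySignals:
    # Hypothetical weekly production signals.
    automation_rate: float    # fraction of cases handled without human touch
    escalations: int          # cases escalated to humans this week
    escalation_capacity: int  # escalations the team can absorb per week


def scope_decision(history: list[WeeklySignals],
                   stability_tolerance: float = 0.02) -> str:
    """Return "expand", "hold", or "contract" from the last four weeks."""
    if len(history) < 4:
        return "hold"  # not enough evidence to read any signal
    recent = history[-4:]
    rates = [w.automation_rate for w in recent]

    # Contraction signals: escalations over capacity, or automation rate
    # falling in every consecutive week of the window.
    over_capacity = any(w.escalations > w.escalation_capacity for w in recent)
    declining = all(later < earlier for earlier, later in zip(rates, rates[1:]))
    if over_capacity or declining:
        return "contract"

    # Expansion signal: automation rate is stable within the tolerance.
    if pstdev(rates) <= stability_tolerance:
        return "expand"
    return "hold"
```

Note what the function does not take as input: leadership pressure, new use cases, or coverage targets. Only production evidence moves the decision.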

Scope that stays manageable creates the conditions for the next decision: how much complexity it actually requires.

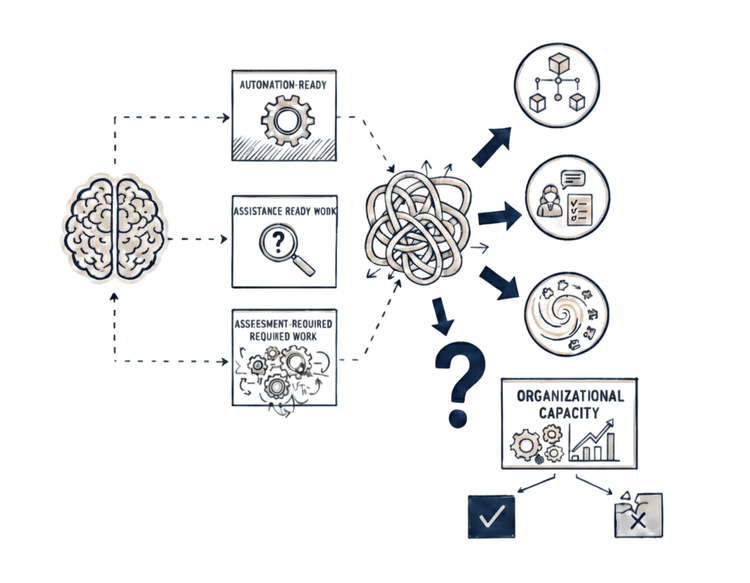

Architecture Design

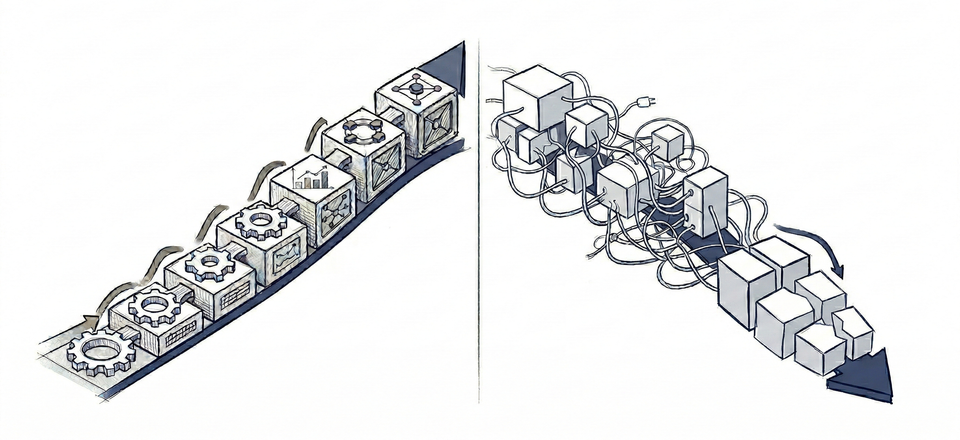

Complexity compounds when architectural choices exceed what the task actually requires.

Teams reach for multi-agent architectures before single agents are proven. They replace entire workflows with AI when most steps need deterministic logic. Or they wrap AI in so many rules and guardrails it becomes deterministic anyway - paying the cost of AI without the benefit.

Unnecessary complexity does not add capability. It adds coordination overhead, opaque failures, and maintenance burden that compounds with every change.

Architectural complexity should increase only when operation exposes a limitation that simpler systems cannot resolve.

Single Agent First

The microservices era exposed this pattern clearly. Teams decomposed systems before understanding their domain - separate services, specialized components, distributed architecture. The complexity that followed obscured requirements, created debugging nightmares, and produced an orchestration burden that exceeded any value that distribution provided. The lesson: start with a well-designed modular monolith, and extract services only when boundaries become clear through operation.

Teams are repeating the same pattern with multi-agent architectures.

A single agent creates the conditions for understanding. One context. One model. One set of behaviors. Every failure visible, every cost traceable, every decision attributable. Modern single agents reach further than they appear to - tools and skills extend what a single agent can handle: retrieval, calculation, API calls, data processing, code generation, specialized reasoning. What previously required multiple agents often does not anymore.

Start with a single agent until operation proves it insufficient - not through assumption, but through demonstrated capability limits. When a single agent genuinely cannot handle the task within acceptable bounds, that failure shows exactly what additional architecture must solve. Complexity added at that point is justified by evidence.

Most implementations never reach that point. What appears to require multiple agents usually requires better task scoping, clearer prompting, improved context design, or higher data quality - problems that adding agents compounds rather than solves.

Hybrid by Design

Most business workflows mix explicit logic with judgment. Treating them as one or the other creates the wrong architecture from the start.

Full automation with AI asks the agent to handle steps where deterministic logic is cheaper, faster, and more reliable. Pure deterministic automation asks rules to handle steps where judgment, ambiguity, and context matter. Both approaches fight the workflow's actual structure.

Hybrid architecture aligns with it. Deterministic systems handle what can be explicitly specified - validation, calculation, routing, transaction processing. AI handles what cannot - interpretation, synthesis, judgment calls, ambiguous inputs. Where one ends, the other begins - and that boundary is designed, not discovered.

Not every step maps cleanly - but most do. A simple test identifies the boundary: can the correct output be verified using only a checklist? Deterministic logic handles it. Does verification require reading between the lines, knowing customer history, or interpreting context? AI handles it. Does it require a calculation followed by interpretation? Deterministic logic performs the math, and AI interprets the result.
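A hybrid workflow of this kind might be sketched as follows. Everything here is an assumption for illustration: the claim is a plain dictionary with hypothetical keys (`policy_id`, `amount_usd`, `deductible_usd`, `notes`), and the AI step is passed in as an `interpret` callable so that no particular model API is implied.

```python
from typing import Callable


def validate(claim: dict) -> list[str]:
    """Checklist-verifiable step: deterministic validation."""
    errors = []
    if not claim.get("policy_id"):
        errors.append("missing policy_id")
    if claim.get("amount_usd", 0) <= 0:
        errors.append("amount must be positive")
    return errors


def payout_after_deductible(amount_usd: float, deductible_usd: float) -> float:
    """Calculation step: deterministic math, never delegated to the model."""
    return max(amount_usd - deductible_usd, 0.0)


def process(claim: dict, interpret: Callable[[str], str]) -> dict:
    """Run deterministic steps first; call the AI only for interpretation."""
    errors = validate(claim)
    if errors:
        # Rejected before any AI cost is incurred.
        return {"status": "rejected", "errors": errors}

    payout = payout_after_deductible(claim["amount_usd"], claim["deductible_usd"])
    # Judgment step: the model interprets the computed result and free-text notes.
    summary = interpret(f"Payout {payout:.2f} USD. Adjuster notes: {claim['notes']}")
    return {"status": "processed", "payout_usd": payout, "summary": summary}
```

Because `interpret` is injected, the deterministic steps can be unit-tested with a stub, and the AI step can be evaluated on its own inputs and outputs.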

The production benefit is direct. Failures trace to specific components. Deterministic steps are testable in isolation. AI steps are evaluable independently. Cost and latency reflect actual AI usage. Business changes update specific components without cascading through the entire system.

Architecture built to demonstrated requirements creates one more condition: the ability to see clearly when those requirements change.

Adaptation Design

Most AI systems are designed for launch, not for what follows it. Teams optimize for initial deployment - measurement is treated as reporting rather than infrastructure. Accuracy is tracked. Latency is monitored. Cost is observed. But the system is not instrumented to detect divergence between model performance, business value, and customer impact over time.

Adaptation design begins there. It builds the visibility required to detect when alignment drifts - before failures surface as regressions, cost overruns, or eroded trust.

Beyond AI Metrics

Tracking accuracy, latency, and cost is necessary. It is not sufficient. Three dimensions determine system health - technical performance, business value, and customer impact - and they must be interpreted together.

AI metrics indicate technical health but cannot confirm business value. A claims processing system hitting 92% routing accuracy looks healthy until you check labor hours recovered: accurate routing of simple claims means nothing if complex claims - the ones requiring the most processing time - are being misrouted and corrected manually downstream.

Business metrics connect directly to the problem defined at the start - labor hours recovered, cost saved, backlog reduction, ROI timeline. These confirm whether the investment is justified, not just whether the model works.

Customer-impact metrics reveal how users actually experience the system - resolution time, satisfaction scores, complaint volume, escalation experience. A system that routes correctly but creates frustrating escalation experiences will erode trust regardless of what accuracy metrics show.

Two patterns reveal when metrics are misleading. High model accuracy alongside flat labor hours recovered signals a hidden tax - the AI handles volume but humans spend as much time reviewing and correcting outputs as they saved. Rising automation rates alongside dropping customer satisfaction signals resolution without solution - tickets close from the system's perspective while users' problems remain unresolved. Both patterns are invisible when dimensions are tracked separately. Both become obvious when tracked together.
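Both patterns can be checked automatically once the three dimensions are stored side by side. The sketch below is a hedged illustration: the `PeriodMetrics` fields, the 0-to-1 satisfaction scale, and the `accuracy_floor` and `min_change` thresholds are assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass
class PeriodMetrics:
    # Hypothetical per-period snapshot spanning all three dimensions.
    model_accuracy: float         # technical: 0.0 to 1.0
    labor_hours_recovered: float  # business: hours saved in the period
    automation_rate: float        # technical: 0.0 to 1.0
    csat: float                   # customer: satisfaction, scaled 0.0 to 1.0


def divergence_flags(prev: PeriodMetrics, curr: PeriodMetrics,
                     accuracy_floor: float = 0.90,
                     min_change: float = 0.01) -> list[str]:
    """Flag the two misleading-metric patterns between consecutive periods."""
    flags = []

    # Hidden tax: accuracy looks healthy, but recovered labor hours are flat or falling.
    hours_gain = curr.labor_hours_recovered - prev.labor_hours_recovered
    if curr.model_accuracy >= accuracy_floor and hours_gain <= 0:
        flags.append("hidden_tax")

    # Resolution without solution: automation rises while satisfaction drops.
    automation_gain = curr.automation_rate - prev.automation_rate
    csat_drop = prev.csat - curr.csat
    if automation_gain > min_change and csat_drop > min_change:
        flags.append("resolution_without_solution")

    return flags
```

Neither check is possible from a single dashboard; each one compares a technical metric against a business or customer metric from the same period.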

Build measurement across all three dimensions before scaling - not as a launch checklist, as ongoing infrastructure. This extends the data capture defined in the foundation - every AI decision, every human correction, every escalation outcome - converting each into signals across all three dimensions continuously.

Most teams build technical measurement and stop - accuracy dashboards, latency monitors, cost tracking. The business-level view never gets built. No visibility into how dimensions diverge over time. No signal when ROI trajectory shifts. No way to detect when scope assumptions no longer hold before failures surface.

That gap requires a separate layer - combined metric views surfacing divergence across dimensions, ROI trajectory tracking against original projections, boundary validity signals, and visibility into the impact of business changes before they go live.

This layer has a primary audience - business owners and product leadership making investment and scope decisions. A defined cadence - scheduled reviews, not reviews triggered by failure - ensures the right people act before problems compound.

Adaptation is not a feature to add later. It is a design requirement.

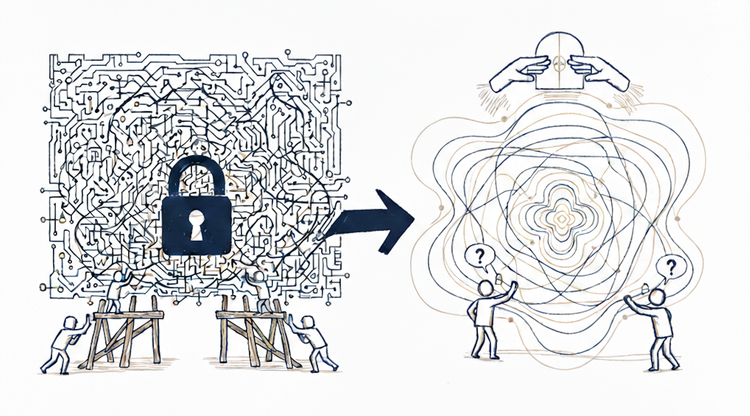

Where It Pays Off

AI systems compound exactly the way they are designed.

With disciplined scope, justified complexity, and built-in adaptation, each production cycle increases clarity instead of burden. Failures surface early. Architecture stays proportional to the task. Expansion follows demonstrated capacity, not ambition.

The payoff is organizational leverage.

Teams can predict the cost of new scope before they commit. Leadership sees ROI trends before they become surprises. Product evolves workflows without destabilizing the system. Engineering improves capability instead of containing regressions.

Most AI systems accumulate hidden coordination cost until they collapse.

Well-designed AI systems accumulate understanding instead - and that compounds.