Escaping the AI Rule Maze

A product manager decides to automate subscription upgrades.

The existing workflow is human-driven. A customer contacts support asking about upgrading mid-cycle. A support agent reviews the plan, usage, billing date, contract constraints - then anticipates consequences. Proration fairness. Customer sentiment. Revenue leakage. If uncertain, escalate to finance. Responsibility stays with the agent.

The PM sees an opportunity: replace the human step with AI.

“We will deploy an AI agent that handles upgrade requests automatically. Faster response, lower cost, 24/7 availability.”

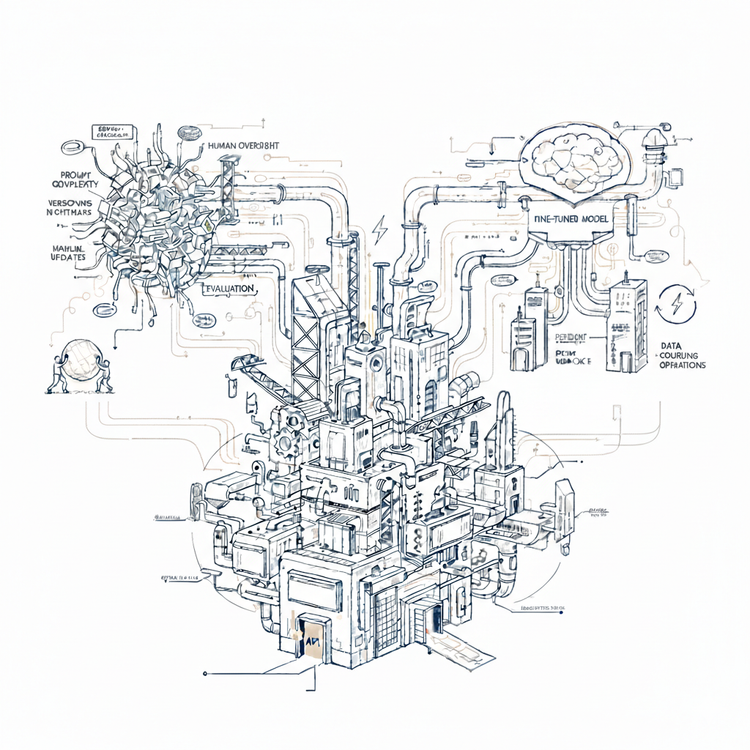

The team designs carefully. Business constraints: maximum discount thresholds, pricing bounds. Operational controls: escalation triggers, confidence scoring. Safety guardrails. Extensive testing, staged rollout, monitoring dashboards. All reasonable precautions.

The AI agent goes live.

The impact is immediate. Response times drop. Costs fall. The system handles volume.

Then:

A long-tenured customer requests a mid-cycle upgrade while holding a legacy discount no longer offered. Proration correct, thresholds respected, rules followed. The agent processes it without escalation. The team responds: add a constraint. "Flag all upgrades involving discontinued discounts for human review."

Two days later, the agent denies an upgrade that should have processed. The customer had two active promotions - one legacy, one current. The rule triggered on "legacy discount" without checking whether standard terms still applied. New constraint: "Process upgrades with current discounts only."
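The misfire above can be sketched in a few lines. This is a minimal illustration, not the team's actual rule engine: the `Promotion` type, the promotion names, and both rule functions are hypothetical, and the narrower variant is only one possible refinement, not the constraint the team adopted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Promotion:
    name: str           # hypothetical promotion label
    discontinued: bool  # True for a legacy discount no longer offered


def coarse_rule(promotions: list[Promotion]) -> str:
    """Hold the upgrade as soon as any discontinued discount is present."""
    if any(p.discontinued for p in promotions):
        return "hold"
    return "process"


def narrower_rule(promotions: list[Promotion]) -> str:
    """Hold only when a legacy discount is present and no current one applies."""
    has_legacy = any(p.discontinued for p in promotions)
    has_current = any(not p.discontinued for p in promotions)
    if has_legacy and not has_current:
        return "hold"
    return "process"


# The customer from the story: one legacy promotion, one current promotion.
customer = [
    Promotion("legacy-loyalty", discontinued=True),
    Promotion("current-offer", discontinued=False),
]

print(coarse_rule(customer))    # hold
print(narrower_rule(customer))  # process
```

The narrower rule resolves this one case, but it is itself another rule to maintain, which is the pattern the rest of the story follows.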

A week later: enterprise contracts with custom terms. These need finance judgment. Now the team has to set up a "review queue" for finance.

Usage patterns shift post-launch. The agent recommends higher-value upgrades without modeling downstream consequences. Weeks later, finance notices average proration has crept up 8%. Not catastrophic, but enough to trigger requests for tighter controls. Another constraint: "Cap total discount at 15% for any upgrade."

...

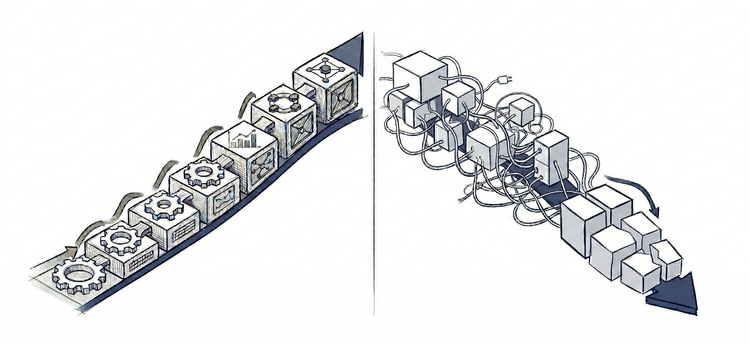

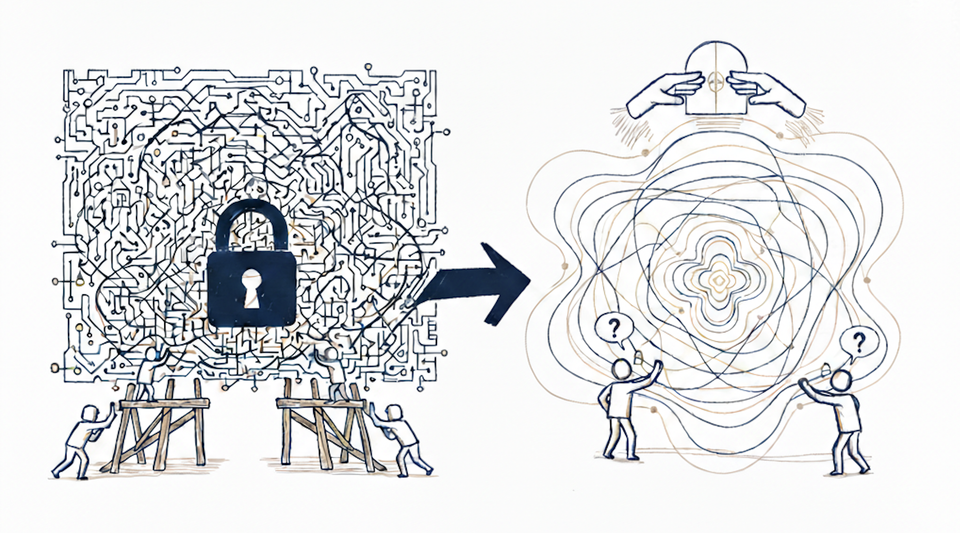

Three months in, the agent operates inside an expanding maze of rules. Each rule narrows what the AI can handle autonomously. The team maintains two systems now: the agent's learned behavior and a growing layer of override constraints. Both must stay synchronized.

The PM designed for control. Expected automation. Set-and-forget.

Reality delivered coordination.

The Divergence

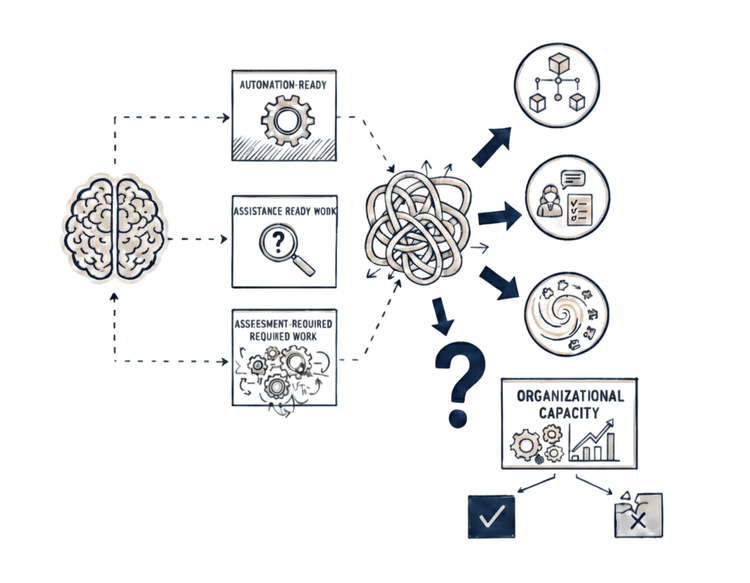

A different team deployed the AI agent for standard tier upgrades only. Clear boundaries. Consistent patterns. Light supervision. When edge cases appear - legacy discounts, enterprise contracts - these escalate by design, not by accumulated exception.
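Escalation by design can be as simple as one scope check in front of the agent. The sketch below assumes a hypothetical `UpgradeRequest` shape and a hypothetical `IN_SCOPE_TIERS` set; it shows the idea of a declared boundary, not this team's implementation.

```python
from dataclasses import dataclass

# Hypothetical boundary: the only tier the agent is allowed to handle.
IN_SCOPE_TIERS = {"standard"}


@dataclass(frozen=True)
class UpgradeRequest:
    tier: str
    has_legacy_discount: bool = False
    is_enterprise_contract: bool = False


def route(request: UpgradeRequest) -> str:
    """Send the request to the agent only if it sits inside the declared boundary."""
    in_scope = (
        request.tier in IN_SCOPE_TIERS
        and not request.has_legacy_discount
        and not request.is_enterprise_contract
    )
    return "agent" if in_scope else "human"


print(route(UpgradeRequest(tier="standard")))                            # agent
print(route(UpgradeRequest(tier="standard", has_legacy_discount=True)))  # human
print(route(UpgradeRequest(tier="enterprise", is_enterprise_contract=True)))  # human
```

Everything outside the boundary goes to a human by default, so a new edge case does not require a new rule.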

As the agent proves reliable within those boundaries, they expand to mid-tier upgrades. Boundaries are adjusted based on supervision time, retraining frequency, drift management, and escalation volume.
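One way to make that expansion decision explicit is to compare observed coordination cost against a budget before widening the boundary. The field names, units, and numbers below are assumptions chosen for illustration; the article does not specify how the team measured any of these.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CoordinationCost:
    supervision_hours_per_week: float
    retrainings_per_quarter: int
    drift_incidents_per_month: int
    escalation_rate: float  # share of in-scope requests escalated, 0.0 to 1.0


def can_expand(observed: CoordinationCost, budget: CoordinationCost) -> bool:
    """Allow expansion only while every observed cost stays within its budget."""
    return (
        observed.supervision_hours_per_week <= budget.supervision_hours_per_week
        and observed.retrainings_per_quarter <= budget.retrainings_per_quarter
        and observed.drift_incidents_per_month <= budget.drift_incidents_per_month
        and observed.escalation_rate <= budget.escalation_rate
    )


# Hypothetical budget and observations for the standard-tier boundary.
budget = CoordinationCost(10.0, 2, 3, 0.10)
observed = CoordinationCost(6.0, 1, 1, 0.05)

print(can_expand(observed, budget))  # True
```

If any single cost exceeds its budget, the boundary stays where it is until that cost comes back down.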

Six months in, the agent handles 70% of upgrades. The remaining 30% - complex contracts, precedent cases, strategic accounts - stay human. No expanding rule maze. No synchronization burden.

They designed for coordination from the start.

Reality matched expectations.

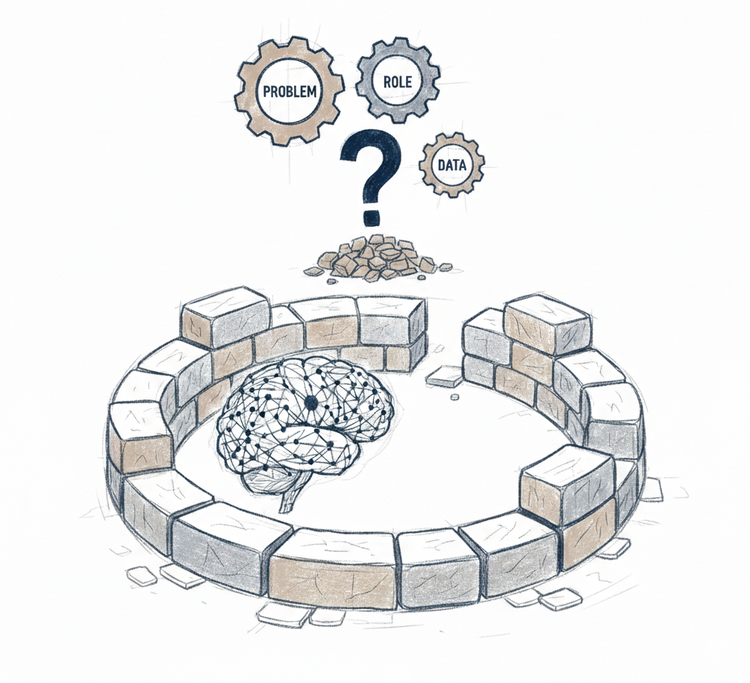

The Recognition Gap

AI does not fail. Operating models do.

The first team asked: "Can AI handle subscription upgrades?"

Answer: yes, with constraints. They designed rules, set thresholds, deployed for coverage. When coordination burden emerged, they fought it with more rules. Then prompt engineering. Then validation layers. Then drift monitoring. Then human fallbacks. Each addition meant more engineering to manage the AI that replaced the humans.

The second team asked: "Where does coordination stay viable?"

Answer: within boundaries where supervision, retraining, and drift management remain affordable. They designed for a subset, proved reliability, expanded deliberately.

Same AI. Same task. Different questions. Different outcomes.

Control-paradigm thinking optimizes for execution coverage. Coordination-paradigm thinking optimizes for sustainable boundaries. The first deploys, then discovers coordination burden. The second designs for coordination burden, then deploys.

Recognition enables design. Design determines placement. Placement determines whether supervision stays affordable, drift detectable, boundaries stable.

Deploy AI where coordination was recognized and designed for. That is leverage. Everywhere else, scaffolding becomes more expensive and less reliable than what it replaced.