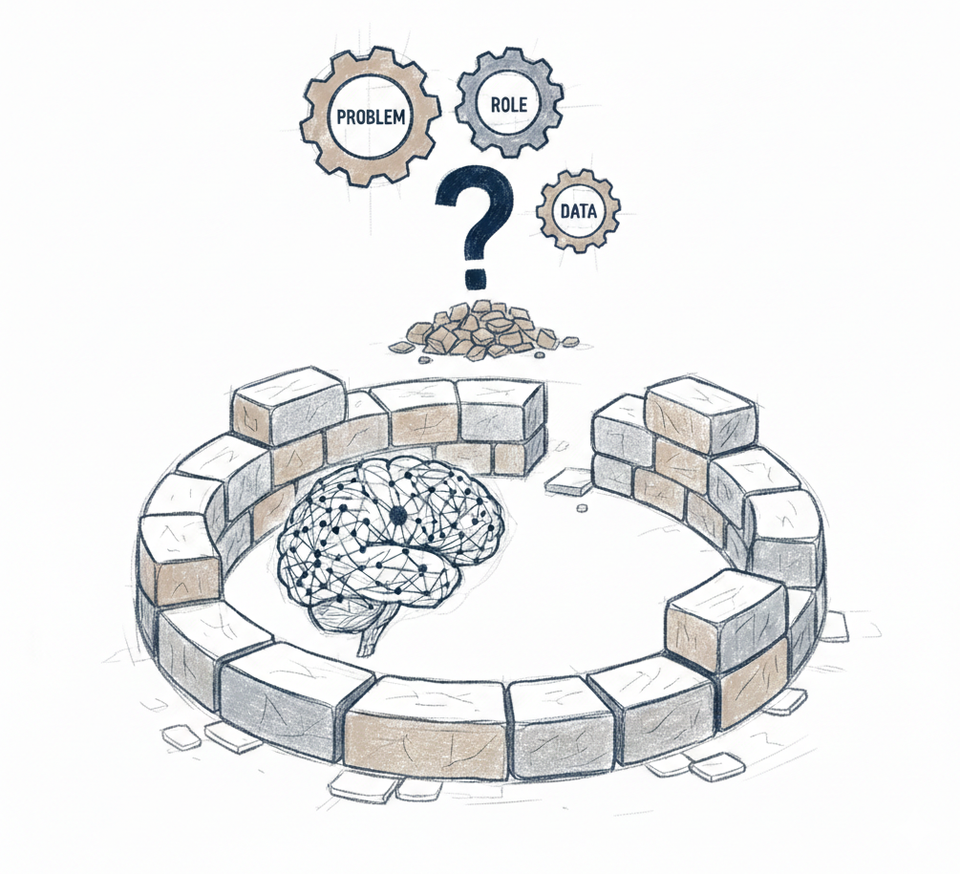

From Placement to Foundation: Designing AI for Production

Viable placement is not enough. Many AI deployments that should work, do not. The problem is never the AI model - it is the design work that precedes building.

Placement evaluation identifies where AI belongs. It does not determine what happens there.

What follows is a design framework that determines whether implementation has a foundation to build on.

The Problem

Many AI initiatives start with "Use AI to process insurance claims", "Use AI to replace agents in customer support", etc.

These are directions. They are not problems.

Teams that skip clear problem definition build in the wrong direction. Vague scope means shifting requirements, discarded features, ungrounded decisions. Production reveals the gap - teams cannot agree if it works, leadership questions ROI, funding gets pulled because no one can articulate what problem was solved.

A clear problem definition is complete, measurable, and bounded. It answers: what exactly needs solving, how much of it exists, what changes if solved, what limits the solution, what success means.

This ensures AI capabilities align with business objectives - serving defined outcomes, not showcasing impressive features for their own sake.

Here is what that looks like in practice:

Specific work: Insurance claims team receives 1,200 property damage claims monthly. Each requires initial review - reading submissions, checking policy coverage, categorizing damage, routing to appropriate adjusters. Takes ~15 minutes per claim.

Business problem: Processors spend 300 hours monthly ($12,000 in labor) on routine initial review that follows predictable patterns in 80% of cases, creating 2-3 day backlog before claims reach adjusters.

Impact: Extended claim resolution time. Customer frustration requiring status updates. Processor capacity consumed by categorization instead of complex edge cases requiring judgment.

Constraints: Regulatory compliance requires manual review for fraud indicators, high-value claims over $50k, and specific policy exclusions. Misrouting accuracy threshold - routing errors delay resolution further. Implementation must show ROI within 6 months.

Success criteria: Automate 80% of straightforward claims, reduce initial review from 2-3 days to same-day, maintain 90% routing accuracy, recover 200 processor-hours monthly.

Clear problem definition changes everything downstream. Teams can evaluate ROI before investing. Engineering knows what data matters, which models fit capability and cost requirements, what infrastructure handles the volume and latency constraints. Product can scope realistically. Leadership can recognize success or failure definitively. Every decision - from model selection to evaluation frameworks - has a clear anchor point. Without it, every decision is negotiable.

Most AI failures are not model failures - they are undefined-problem failures. When teams cannot clearly articulate what is being solved, how much of it exists, and what success looks like, performance becomes subjective. The AI is blamed for outcomes that were never structurally anchored.

The framework that creates this structure:

- Identify the specific workflow or task. Not "customer support" but "password reset requests" or "initial claims review" or "subscription upgrade processing".

- Quantify current state. Volume handled, time per task, labor cost, delays created. Numbers matter - they establish the baseline.

- Specify business impact. Financial cost, operational bottleneck, customer friction. Connect the task to outcomes leadership cares about.

- Document constraints. Regulatory requirements, accuracy thresholds, budget limits, timeline expectations. These bound what is viable.

- Set measurable success criteria. What changes, by how much, when measured how. "Reduce initial review time from 2-3 days to same-day" not "improve efficiency".

This foundation enables solution design. AI's role, scope boundaries, architectural choices, evaluation frameworks - all build from these criteria.

The AI's Role

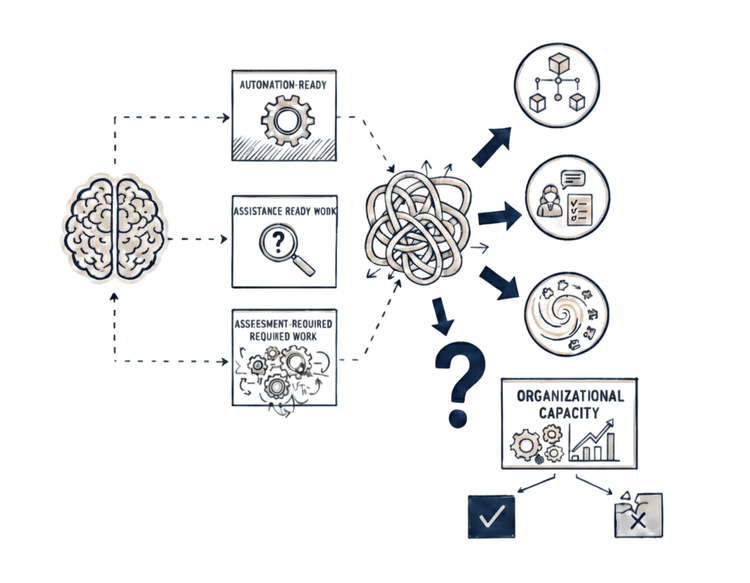

Teams often treat role design as an implementation artifact. It is not. It is a product decision.

The boundary between automation and augmentation determines accountability, risk exposure, operational burden, and long-term sustainability. If that boundary is unclear, engineering cannot compensate for it.

AI's role bridges problem and implementation - defining how AI participates in solving the problem, where boundaries exist, and what structure makes that participation viable.

Participation modes

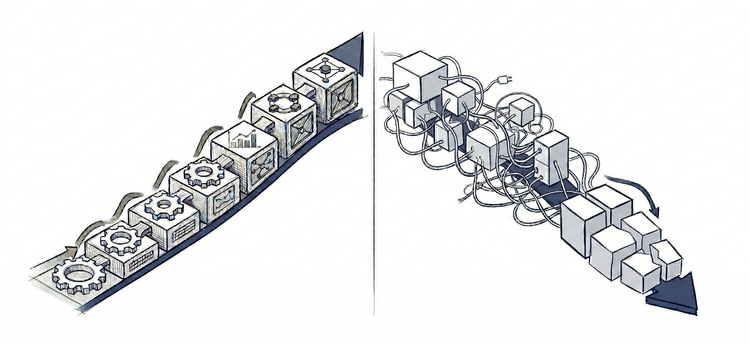

Map the work to two categories based on structural characteristics.

Full automation: Routine, predictable patterns where AI's capabilities match task requirements. Low-stakes outcomes where errors are containable. Clear success criteria that AI can optimize toward. No consequence-grounded judgment required.

Augmentation: AI provides analysis, recommendations, synthesis. Humans own the decision. Use when judgment matters but AI can surface insights humans cannot generate at scale. Task requires weighing tradeoffs AI cannot fully model. Accountability must stay human.

For claims processing:

AI automates routine categorization and routing for 80% of straightforward claims - vehicle accidents, water damage, roof damage, etc. Predictable patterns, containable errors, clear success criteria.

AI augments complex multi-damage claims - multiple vehicles in accident, fire + water damage, etc. Provides category suggestions and flags ambiguity, but humans decide given judgment requirements.

High-value claims over $50k, fraud indicators, precedent-setting decisions start outside AI's scope.

HITL structure

Define where humans stay in the loop and why. Not just "AI escalates uncertainty" but explicit design of escalation triggers, handoff mechanics, and feedback loops.

Escalation criteria: What triggers handoff from AI to human. Case characteristics that exceed AI's reliable boundaries. Customer segments requiring special handling. Compliance requirements. Uncertainty thresholds combined with context.

Handoff design: What context humans receive when AI escalates. What analysis AI provides to inform human decisions. How human corrections flow back to improve AI boundaries over time. Explicit design of the transition point between AI and human work.

Feedback loops: How human decisions improve AI boundaries over time. Which corrections get captured and how. What patterns trigger boundary expansion reviews. How validated changes feed back into the system - through context updates, prompt refinements, or boundary modifications.

For claims processing:

Escalation triggers: Claims over $50k automatically route to senior processors regardless of AI confidence. Uncertainty above 0.7 combined with enterprise customer segment requires human review.

Handoff mechanics: Processor receives: AI-suggested category with confidence percentage, three most similar historical claims with outcomes, relevant policy sections highlighted in context, explicit flag showing why it escalated (threshold exceeded, fraud pattern detected, novel scenario).

Feedback loops: When human processors override the same AI category assignment three or more times in similar scenarios, the pattern flags for review and potential automation boundary adjustment. Validated overrides feed the next update cycle - informing context adjustments, prompt refinements, or boundary modifications.

Validating the Role

Before proceeding, ask yourself three questions about the role you have defined:

- Can you articulate why each decision belongs in its category based on task characteristics, not AI capability?

Routine claims are automated because patterns are predictable (damage types match historical data), stakes are containable (routing errors can be corrected), and success is measurable (90% routing accuracy target). Not because AI can technically do it. - Does the design account for edge cases and allow boundaries to evolve?

Novel damage types escalate automatically rather than forcing new unreliable automation. Feedback loops identify expansion patterns - where processors consistently agree with AI. These become expansion candidates. - Are escalation volumes sustainable?

80% automation leaves 20% for human review - about ~60 hours monthly, sustainable with current capacity. High-risk cases (fraud, high-value, novel) reach humans while automation handles volume.

If you cannot answer these clearly, the role definition needs refinement. This design informs every architectural decision that follows.

Data Strategy

Role design determines how AI participates. Data strategy determines whether that participation is executable and whether it can improve over time.

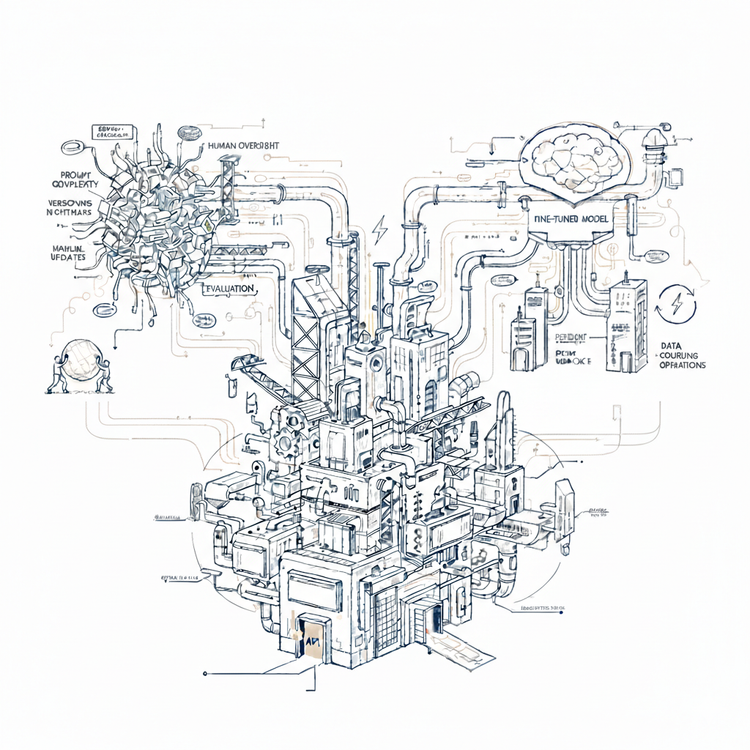

Most teams deploying LLMs today are not training or fine-tuning models. They are working with foundation models and shaping performance through context - what the model sees at inference time, what examples it draws from, what organizational knowledge it can retrieve. Data strategy in this environment is not about building training datasets. It is about what data makes the model reliable from day one, and what gets captured in production to enable measurement and improvement.

Data for inference

Foundation models arrive with broad capability. What they lack is your context - the organizational data and business specifics that make performance reliable within defined boundaries.

Three types of data matter before deployment:

Historical cases for retrieval. Similar past decisions with known outcomes give the model relevant context when it encounters ambiguous inputs. These are not training examples - they are reference points the model reasons against at inference time. Coverage matters: historical cases should span the full variation the model will encounter in production, including boundary cases near escalation thresholds, not just clear-cut ones.

Policy and constraint documentation. The model needs explicit access to what governs decisions - rules, thresholds, regulatory requirements, documented exceptions. Structured and retrievable, not buried in tribal knowledge or informal practice. If this documentation does not exist, it needs to be created before deployment - not discovered through production failures.

Labeled examples for prompts. A small set of high-quality examples - input, correct output, reasoning - embedded in prompts anchors model behavior to organizational standards. These demonstrate what good looks like, specific enough to constrain outputs toward the patterns that matter.

Readiness check: can you retrieve similar historical cases reliably, is policy documentation structured enough to surface relevant constraints, and do labeled examples exist across the variation the model will encounter - including edge cases?

Data from production

Inference data makes the model reliable at launch. Production data makes it improvable over time.

Every decision the model makes is a signal. Every human correction is a stronger one. Without deliberate capture, these signals disappear - performance drifts invisibly, boundary adjustments happen reactively, and improvement cycles have nothing to run on.

Three things get captured systematically:

Every AI decision: full input and output, including but not limited to: prompt, context retrieved, reasoning chain, confidence score, escalation trigger if applicable. This is the minimum observability layer - it enables tracing any decision with full visibility into what the model had available and what it decided.

Every human correction: when humans override AI outputs, the correction is captured with full context - what the AI produced, what prompt and context it was working with, and what the human changed it to. The "based on what" matters as much as the override itself - it reveals whether the problem is prompt, wrong context, or the model itself.

Every escalation outcome: which trigger fired, what context the AI had, the AI's suggestion, the human decision, what changed, and time to resolution. Patterns inform boundary decisions in both directions - consistent human agreement with AI suggestions signals expansion candidates, consistent disagreement in previously reliable territory signals shrinkage - data drift, policy change, or shifting patterns the model no longer handles reliably.

This maps directly to the feedback loops designed in AI's Role. The override threshold that flags boundary adjustment only works if corrections are captured with full context. Boundary expansion and shrinkage candidates only emerge if escalation outcomes are systematically recorded. Production data is not a separate function - it is what makes those loops executable rather than theoretical.

What This Means

Inference data confirms the role defined in AI's Role is executable from day one. Production data ensures it can improve. Together they convert role design from concept into a system that can be measured and adjusted over time.

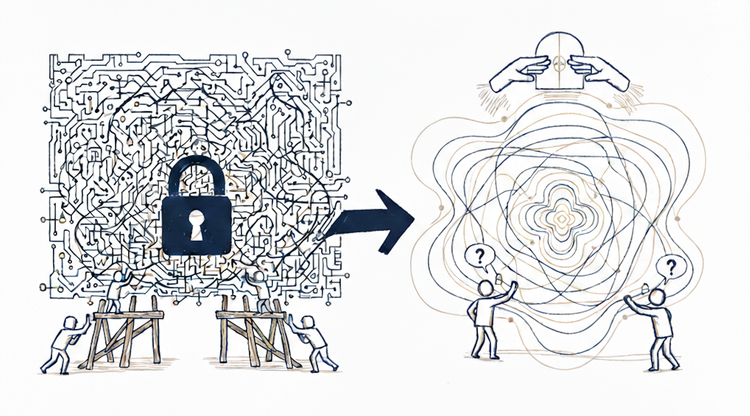

The Foundation

Most AI implementations are designed in production - through failures, accumulated fixes, and reactive engineering. Problem definition gets revisited when teams cannot agree if it works. AI's role gets clarified when boundaries blur. Data strategy gets built when improvement cycles have nothing to run on.

This framework moves that design work to where it belongs - before building starts, when decisions are still cheap and foundations can still be laid.