The Invisible Work AI Reveals

Three months after deploying their AI support agent, the product manager sits in a retrospective nobody wanted to schedule.

The numbers still look good. Response times down 60%. Volume up 3x. Customer satisfaction holding.

But the system is breaking.

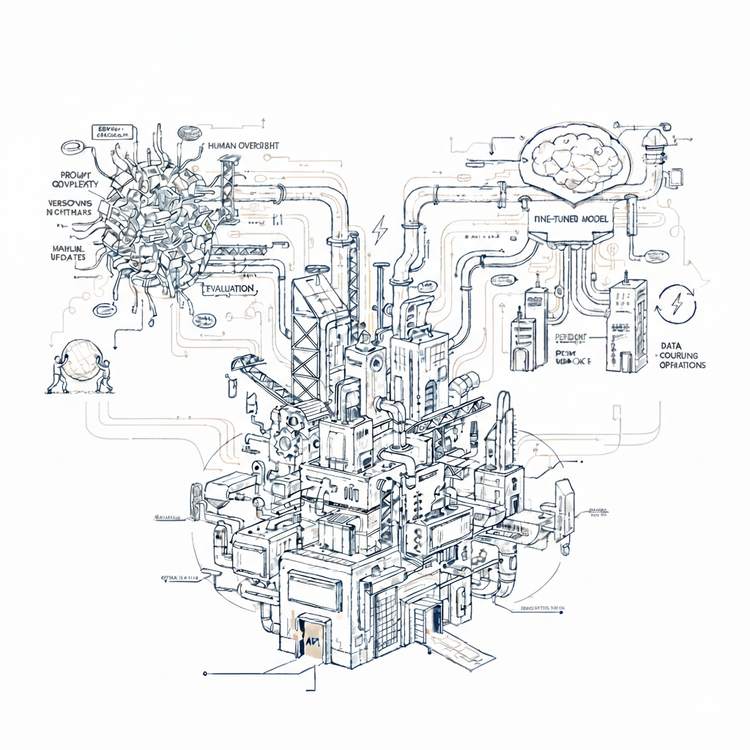

Engineering maintains 47 distinct rules governing the AI agent - split across prompts, few-shot examples, and validation layers. Up from 9 at launch. Each rule interacts with others in ways no one fully tracks. Last week, a routine constraint adjustment blocked all promotional upgrades for six hours before anyone noticed.

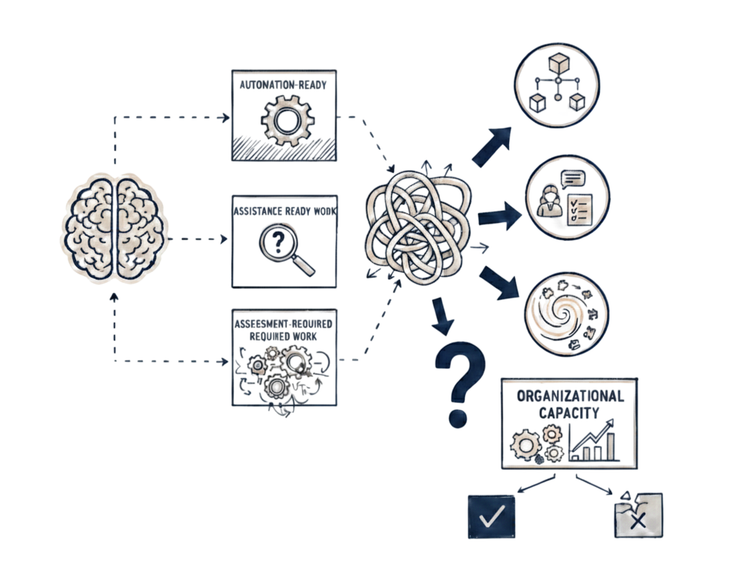

Operations runs three different review queues now - standard, enterprise, and "AI anomaly" - each requiring human approval before the AI's decision executes. Review times for escalated cases exceed what manual processing averaged before deployment.

Finance escalated last month's discounting errors - $47K in unexpected revenue impact. Leadership demanded accountability. Engineering pointed to Product. Product pointed to Engineering. Operations pointed to both. No one owned it.

And business changes are coming - new enterprise tiers, revised discount structures, promotional campaigns for dynamic pricing. Six weeks out.

The team looks at their brittle system, then at the changes approaching. Updating it will require touching dozens of interconnected rules, revalidating every interaction, testing edge cases no one documented, coordinating across three teams who can barely keep up now.

The current system barely works. The future system will not.

Operations wants to revert to manual processing. Leadership is questioning the entire AI strategy.

The PM remembers the launch. Clean deployment. Early results exceeded expectations. Leadership celebrated. The team was confident.

Now they are deciding whether to shut it down.

The Invisible Work

The question everyone keeps asking: what went wrong?

The PM reviews the original idea. Replace human agents with AI. Faster response, lower cost, 24/7 availability. Design for what the AI would do - process upgrade requests, check constraints, apply discounts, update accounts.

They designed for execution. That was the miss.

When support agents handled upgrades, they executed tasks - but they also did something else. Something the team never explicitly designed for because it operated mostly invisibly.

A customer support agent sees an enterprise account requesting an upgrade. The discount comes to 25% - higher than the standard 20% enterprise tier. The contract mentions "promotional pricing up to 30%". Technically within bounds.

The agent pauses.

Not because a rule tells them to pause. Because they recognize uncertainty. The agent checks the documentation and searches the internal wiki - no precedent for discounts above 20% on promotional terms. The parameters technically allow it, but they have never processed a discount this high on a legacy contract before. The agent knows that mistakes on enterprise accounts reach the C-level.

The agent escalates to finance: "Request appears valid but outside my experience. Want confirmation before processing".

Another agent sees an upgrade request from basic to premium tier. Straightforward - process immediately, charge the difference. Technically correct. But they notice: customer signed up three days ago, still receiving onboarding emails.

The agent delays processing for 24 hours and sends a message: "Saw your upgrade request. Just want to confirm - you're still in your first-week trial, so if you wait four days you can evaluate premium features before committing to the upgrade".

Processing immediately is valid. But it creates regret-based cancellations and support burden later. The agent sees past immediate execution to downstream consequence.

...

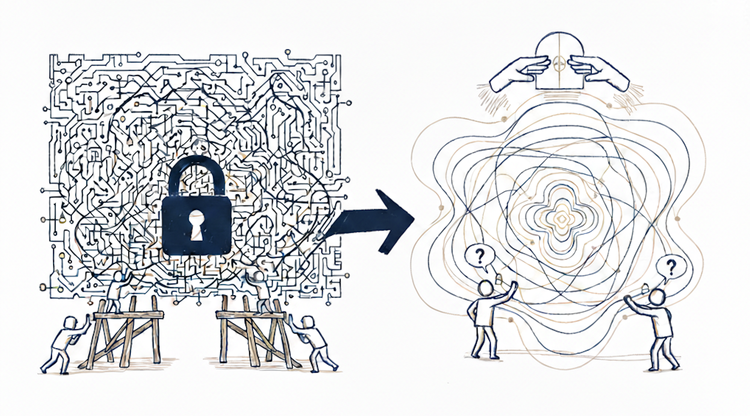

Both agents coordinate. They recognize what they do not know, anticipate what matters beyond the immediate task, and adjust based on context that was not explicitly specified.

Consequence-Grounded Judgment

The week after the AI agent launched, both scenarios appeared.

Enterprise account, 27% discount, within contract bounds. The AI processed it. No pause. No recognition of uncertainty. No escalation.

Two weeks later, Finance flagged it - unexpected revenue impact, precedent set for other enterprise accounts. Leadership wanted to know why it was approved.

Trial period customer requesting immediate upgrade. Technically valid. The AI processed it. Customer charged, upgrade confirmed.

Four days later, the customer canceled. Support ticket: "Realized I could have tried premium features in my trial first. Frustrated I paid when I didn't need to".

The team's response: add constraints.

"Flag all enterprise upgrades above 20% discount for human review."

"Delay upgrades for accounts less than 7 days old."

The first rule caught too many routine cases - enterprise accounts with legitimate discounts now required manual approval. They refined it: "Flag enterprise upgrades above 20% discount on legacy contracts for human review."

Better. But now it missed combinations that still needed judgment - current contracts with custom payment terms, mid-market accounts miscategorized as enterprise. The second rule also blocked legitimate urgent upgrades while missing the actual signal. Account age was a proxy for onboarding status, but not all new accounts were in trial, and some older accounts had extended their trials.
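A short sketch makes the misfires concrete. This is an illustration only, not the team's actual implementation: the `UpgradeRequest` fields, the function names, and the sample accounts are all hypothetical. Only the two thresholds (20% discount, 7 days) come from the rules quoted above.

```python
from dataclasses import dataclass


@dataclass
class UpgradeRequest:
    # All field names are hypothetical, chosen for illustration only.
    segment: str
    discount_pct: float
    legacy_contract: bool
    account_age_days: int
    in_trial: bool  # the real signal that account age only approximates


def needs_human_review(req: UpgradeRequest) -> bool:
    """Refined rule: enterprise, above 20% discount, legacy contract."""
    return (
        req.segment == "enterprise"
        and req.discount_pct > 20
        and req.legacy_contract
    )


def should_delay(req: UpgradeRequest) -> bool:
    """Second rule: delay upgrades for accounts less than 7 days old."""
    return req.account_age_days < 7


# A new account that is not in trial and needs the upgrade now: delayed anyway.
urgent_new = UpgradeRequest("basic", 0, False, 2, in_trial=False)

# An older account on an extended trial: the age rule lets it straight through.
extended_trial = UpgradeRequest("basic", 0, False, 30, in_trial=True)

# An enterprise upgrade on a current (non-legacy) contract: the refined rule
# skips it, even if custom terms on that contract would warrant a second look.
current_contract = UpgradeRequest("enterprise", 27, False, 400, in_trial=False)

print(should_delay(urgent_new))              # True
print(should_delay(extended_trial))          # False
print(needs_human_review(current_contract))  # False
```

Each function does exactly what its rule says. The misses come from the gap between what the rule checks and what the human agent was actually noticing: `account_age_days` stands in for `in_trial`, and the two do not always agree.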

More scenarios appeared. Each became another rule. Or existing rules became more complicated to handle new combinations.

Meanwhile, business evolved. Marketing launched promotional campaigns. The enterprise definition expanded to include mid-market accounts with annual contracts.

The enterprise discount rules - did they apply to mid-market now? The original rules did not distinguish. Promotional campaign rules handled new promotions OR legacy contracts, not promotional campaigns applied to legacy contracts. Combinations the rules never anticipated. New edge cases emerged - now involving new business logic interacting with old patterns.
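The coverage gap can be sketched the same way. The rule table below is hypothetical (the rule names, the `promo` and `legacy` flags, and the `standard` fallback are assumptions made for illustration), but it mirrors the situation described: one rule for new promotions, one for legacy contracts, and nothing for a promotion applied to a legacy contract.

```python
from itertools import product

# Hypothetical rule table: each rule names the exact conditions it was written for.
RULES = {
    "new_promotion": {"promo": True, "legacy": False},
    "legacy_contract": {"promo": False, "legacy": True},
    "standard": {"promo": False, "legacy": False},
}


def matching_rules(case: dict) -> list:
    """Return the names of rules whose conditions all match this case."""
    return [
        name
        for name, conditions in RULES.items()
        if all(case[key] == value for key, value in conditions.items())
    ]


def uncovered_cases() -> list:
    """Enumerate every combination of flags and collect those no rule handles."""
    keys = ["promo", "legacy"]
    gaps = []
    for values in product([False, True], repeat=len(keys)):
        case = dict(zip(keys, values))
        if not matching_rules(case):
            gaps.append(case)
    return gaps


print(uncovered_cases())  # [{'promo': True, 'legacy': True}]
```

With two flags the gap is easy to spot. Every additional condition doubles the number of combinations to enumerate, which is one way to see why each update meant revalidating the entire system.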

Rules and constraints kept accumulating - across prompts, few-shot examples, validation layers, edge case exceptions. Each change risked breaking what already worked. Every update required validating the entire system.

...

What human agents handled naturally - adjusting judgment as context evolved - became reactive patching that could not keep pace.

More and more rules and constraints now attempted to capture what human judgment provided automatically. Knowing what you do not know. Anticipating what matters beyond immediate execution. Adapting as context evolves.

The team was trying to specify something that emerged from consequence, not from specification.