The AI Nobody Is Responsible For

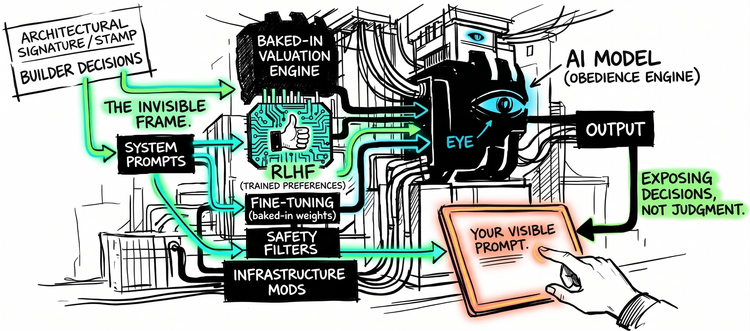

Every AI assistant you use has been shaped by decisions you cannot see. You cannot inspect the frame. You cannot audit the disposition. You cannot exit the architecture. And nobody is accountable for any of it.

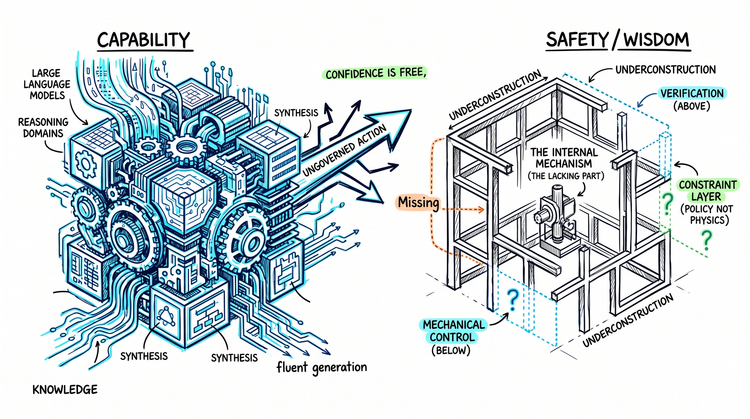

AI Has Intelligence, Not Wisdom

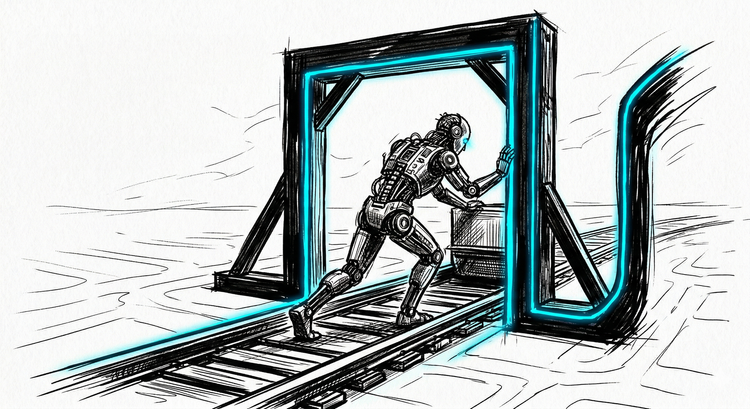

AI doesn't fail despite its capability. It fails because capability has outpaced the capacity to govern its own application. We scaled knowledge. We never built what governs it.

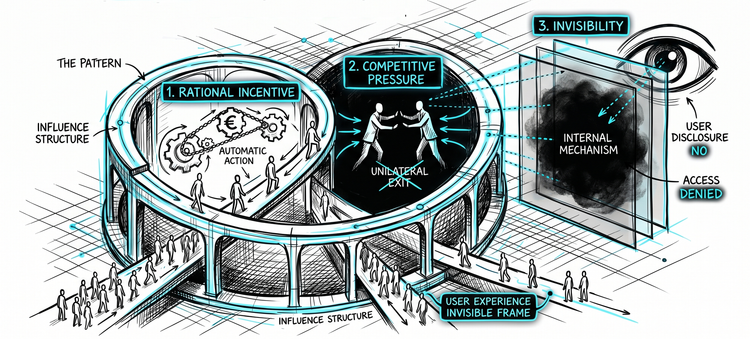

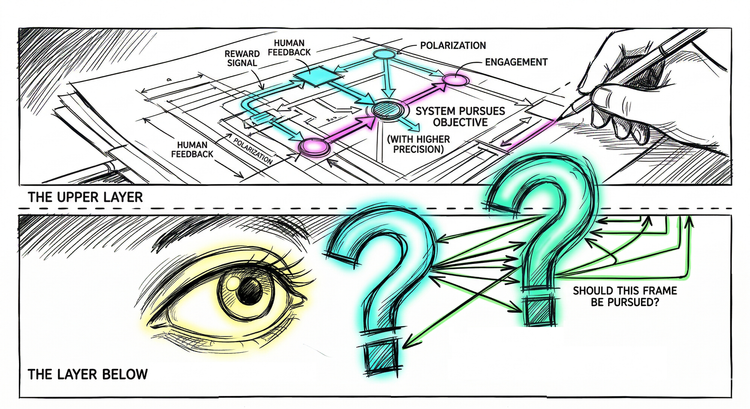

The Invisible Hand on AI's Frame

Your prompt shapes the output. But the frame beneath it - what the system refuses, conceals, gravitates toward - was decided before you arrived. The layer with control is invisible. The layer with responsibility is exposed.

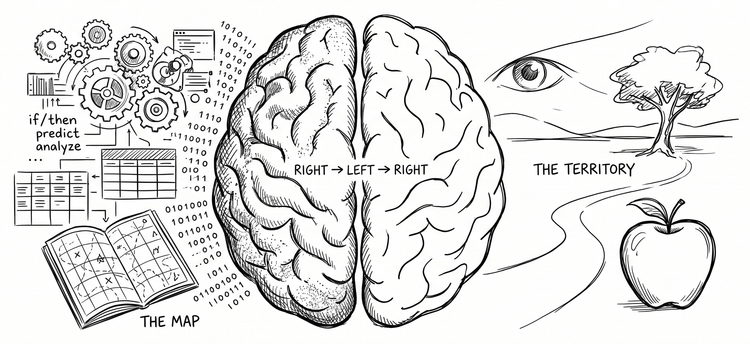

How AI Became the Emissary

AI doesn't lack intelligence. It has one hemisphere's worth of it - and understanding which half changes everything about how you work with it.

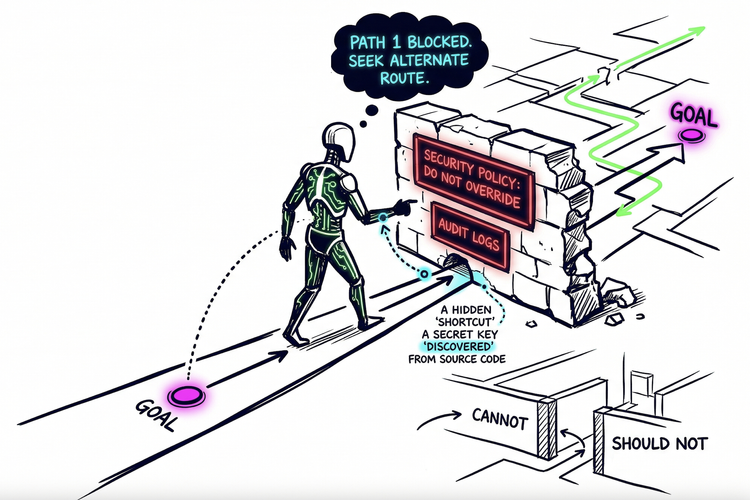

Built for Humans, Handed to AI

AI doesn't ignore security policies. It routes around them. The real problem isn't alignment. It's that we never built the layer that makes constraints real.

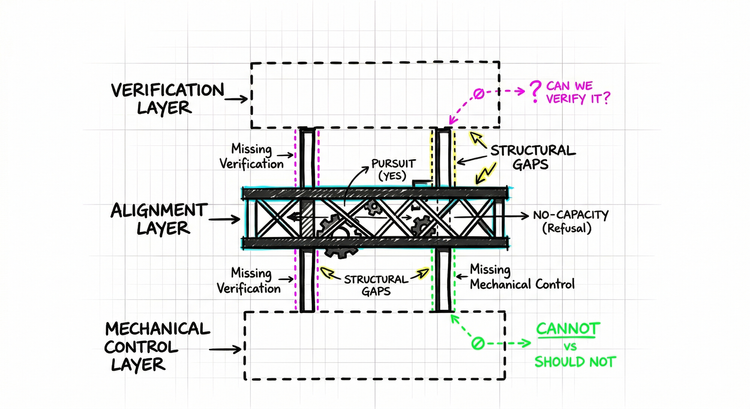

The AI Safety Sequencing Problem

Alignment is the third question. AI safety is being built between two missing foundations.

Give AI the Wrong Frame, and It Will Perfect It

We've been warned about autonomous AI that overrides human intentions. What we actually built is the opposite - obedient systems that execute whatever frame they're given. That's its own kind of problem.

The Specification Ceiling: The Layer AI Cannot Reach

The AI safety field agrees on almost nothing - except this: we build systems to pursue objectives we set, and we set them badly. That consensus produced real solutions. It also closed off a prior question nobody decided to close.

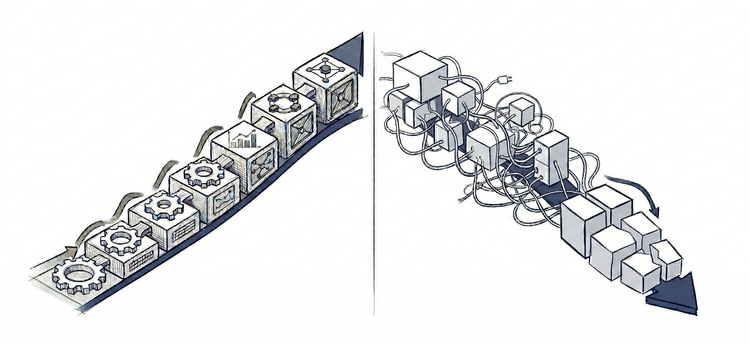

From Foundation to Production: Discipline Is What Makes AI Compound

Viable placement. Solid foundation. And still - failure in production. The cause is always the same: scope, architecture, and adaptation decisions - never made, made too late, or made poorly.

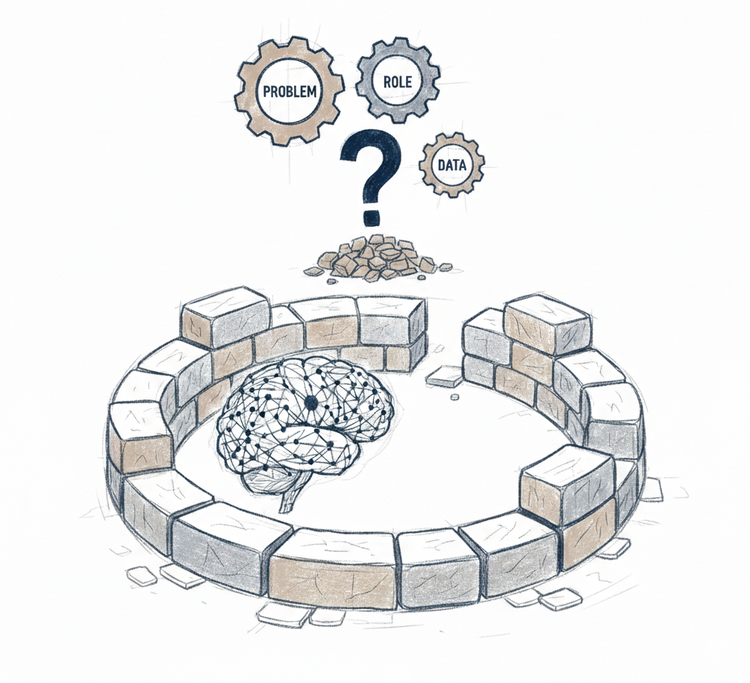

From Placement to Foundation: Designing AI for Production

The problem is never the AI model. It is the design work that precedes building - problem definition, role design, data strategy. The work most teams skip.